Project overview

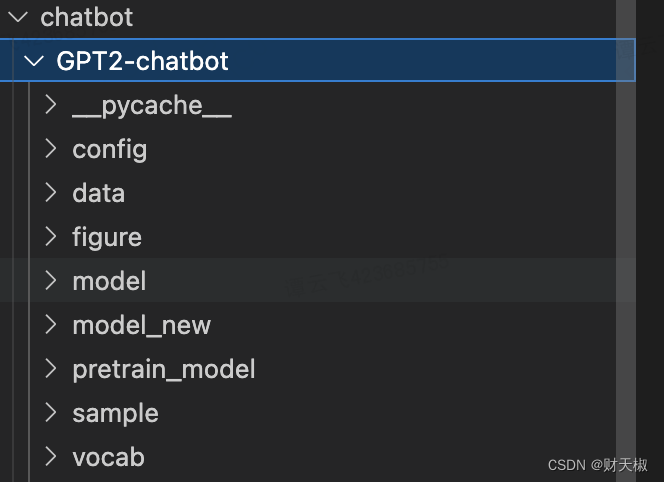

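- The overall directory structure is as follows:

- Apart from model, which is required, everything else in the structure above can be adjusted through the arguments passed to the training script, and some entries do not have to exist.

- data

  - train.pkl: the file produced by tokenizing the raw training corpus. It stores a list object in which each item is one multi-turn dialogue, i.e. one training example.

- model: holds the dialogue generation model

  - config.json: configuration file for the model parameters

  - pytorch_model.bin: the model weights file

- vocab

  - vocab.txt: the vocabulary file. The default vocabulary size is 13317; to use a custom vocabulary, set the vocab_size field in config.json to the matching size.

- sample: stores the chat history generated during human-machine chit-chat

- train.py: training code

- interact.py: human-machine interaction code

- preprocess.py: data preprocessing code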
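The vocab_size requirement above can be checked before training starts. The following is a minimal sketch, assuming vocab.txt holds one token per line and config.json is a plain JSON object with a vocab_size field. The helper name `check_vocab_size` and the temporary demo files are hypothetical and not part of the project.

```python
import json
import os
import tempfile


def check_vocab_size(vocab_path, config_path):
    """Return (tokens_in_vocab, vocab_size_in_config, sizes_match)."""
    with open(vocab_path, encoding="utf-8") as f:
        # Each line is one token; the line index is the token id.
        n_tokens = sum(1 for _ in f)
    with open(config_path, encoding="utf-8") as f:
        vocab_size = json.load(f)["vocab_size"]
    return n_tokens, vocab_size, n_tokens == vocab_size


# Demo with throwaway files standing in for vocab/vocab.txt and model/config.json.
with tempfile.TemporaryDirectory() as tmp:
    vocab_path = os.path.join(tmp, "vocab.txt")
    config_path = os.path.join(tmp, "config.json")
    with open(vocab_path, "w", encoding="utf-8") as f:
        f.write("\n".join(["[PAD]", "[UNK]", "[CLS]", "[SEP]", "你", "好"]) + "\n")
    with open(config_path, "w", encoding="utf-8") as f:
        json.dump({"vocab_size": 13317}, f)
    result = check_vocab_size(vocab_path, config_path)
    print(result)
```

A mismatch like the one in this demo is what the `assert model.config.vocab_size == tokenizer.vocab_size` line in train.py would reject at startup.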

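Overall workflow of the project

- Step 1: data module. Download the datasets by following the dataset address section below; it lists where each dataset comes from, along with the code for merging them.

- Step 2: run preprocess.py on the resulting data to produce the train.pkl file. Then create a data folder in the project root and move train.pkl into it.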

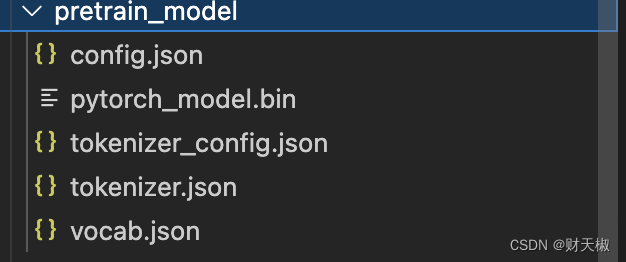

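- Step 3: download the files of the gpt2 pretrained model from the Hugging Face website. The specific files to download are as follows:

- Step 4: run train.py to fine-tune the model on the collected dataset

- Step 5: use chatbot.py for human-machine interaction and inference with the model (the directory listing above names the interaction script interact.py)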
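Steps 2 and 4 can be driven from a small script. This is a sketch that assumes both scripts sit in the current directory and uses only command-line flags that appear in the argparse definitions of preprocess.py and train.py in this article. The corpus path `corpus.txt`, the function name `build_commands`, the choice of `model` as the pretrained directory, and the epoch count are placeholders.

```python
import sys


def build_commands(corpus_path, epochs=20):
    """Return argv lists for preprocessing (step 2) and fine-tuning (step 4)."""
    preprocess_cmd = [
        sys.executable, "preprocess.py",
        "--train_path", corpus_path,
        "--save_path", "data/train.pkl",
    ]
    train_cmd = [
        sys.executable, "train.py",
        "--train_path", "data/train.pkl",
        "--pretrained_model", "model",
        "--epochs", str(epochs),
    ]
    return [preprocess_cmd, train_cmd]


commands = build_commands("corpus.txt", epochs=5)
for cmd in commands:
    # Printed only; pass each list to subprocess.run(cmd, check=True) to execute it.
    print(" ".join(cmd))
```

Because preprocess.py writes its log to data/preprocess.log by default, the data folder has to exist before the first command runs.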

Dataset address

- https://github.com/codemayq/chinese-chatbot-corpus: follow the README there to obtain the corresponding datasets

- Then run the preprocess.py described above to obtain the training dataset train.pkl
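To make the train.pkl format concrete: preprocess.py stores a pickled list in which each dialogue is a flat list of token ids of the form [CLS] utterance1 [SEP] utterance2 [SEP]. The sketch below builds and inspects such a list in memory. The ids 101 and 102 for [CLS] and [SEP] and all other token ids are made-up placeholders (the real ids come from vocab.txt), and `summarize` is a hypothetical helper.

```python
import pickle

CLS_ID, SEP_ID = 101, 102  # placeholder ids; real values depend on vocab.txt

# Two toy dialogues, already tokenized.
dialogues = [
    [CLS_ID, 11, 12, SEP_ID, 13, 14, 15, SEP_ID],
    [CLS_ID, 21, SEP_ID, 22, 23, SEP_ID, 24, SEP_ID],
]

# Round-trip through pickle, the same serialization train.pkl uses.
blob = pickle.dumps(dialogues)
loaded = pickle.loads(blob)


def summarize(data, max_len=150):
    """Count dialogues, turns per dialogue, and dialogues longer than max_len."""
    turns = [ids.count(SEP_ID) for ids in data]
    too_long = sum(1 for ids in data if len(ids) > max_len)
    return {"dialogues": len(data), "turns": turns, "truncated": too_long}


summary = summarize(loaded)
print(summary)
```

With a real file, the in-memory round trip would be replaced by opening data/train.pkl in "rb" mode and calling `pickle.load` on it. Dialogues longer than --max_len (150 by default) are truncated by MyDataset at training time.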

Training code: train.py

    import argparse
    import logging
    import os
    import pickle
    from datetime import datetime
    from os.path import join

    import torch
    import torch.nn.functional as F
    import torch.nn.utils.rnn as rnn_utils
    import torch.optim as optim
    import transformers
    from torch.nn import DataParallel
    from torch.utils.data import DataLoader
    from transformers import BertTokenizerFast, GPT2Config, GPT2LMHeadModel

    # dataset.py is listed later in this article.
    from dataset import MyDataset
    from pytorchtools import EarlyStopping


    def set_args():
        parser = argparse.ArgumentParser()
        parser.add_argument('--device', default='3', type=str, required=False, help='which GPUs to use')
        parser.add_argument('--no_cuda', action='store_true', help='do not train on GPU')
        parser.add_argument('--vocab_path', default='vocab/vocab.txt', type=str, required=False,
                            help='path to the vocabulary file')
        parser.add_argument('--model_config', default='config/config.json', type=str, required=False,
                            help='model parameter configuration')
        parser.add_argument('--train_path', default='data/train.pkl', type=str, required=False,
                            help='path to the training set')
        parser.add_argument('--max_len', default=150, type=int, required=False,
                            help='maximum input length during training')
        parser.add_argument('--log_path', default='data/train.log', type=str, required=False,
                            help='where to store the training log')
        parser.add_argument('--log', default=True, help='whether to write a log')
        parser.add_argument('--ignore_index', default=-100, type=int, required=False,
                            help='label tokens equal to ignore_index do not contribute to the gradient')
        parser.add_argument('--epochs', default=20, type=int, required=False, help='maximum number of training epochs')
        parser.add_argument('--batch_size', default=64, type=int, required=False, help='training batch size')
        parser.add_argument('--gpu0_bsz', default=10, type=int, required=False, help='batch size on GPU 0')
        parser.add_argument('--lr', default=2.6e-5, type=float, required=False, help='learning rate')
        parser.add_argument('--eps', default=1.0e-09, type=float, required=False, help='epsilon of the AdamW optimizer')
        parser.add_argument('--log_step', default=1, type=int, required=False, help='report the loss every this many steps')
        parser.add_argument('--gradient_accumulation_steps', default=4, type=int, required=False,
                            help='gradient accumulation steps')
        parser.add_argument('--max_grad_norm', default=2.0, type=float, required=False)
        parser.add_argument('--save_model_path', default='model_new', type=str, required=False,
                            help='model output path')
        parser.add_argument('--pretrained_model', default='./pretrained_model', type=str, required=False,
                            help='path to the pretrained model')
        parser.add_argument('--num_workers', type=int, default=0, help='number of workers used by the dataloader')
        parser.add_argument('--patience', type=int, default=0,
                            help='used for early stopping; 0 disables early stopping. A model selected by '
                                 'early stopping does not necessarily generate better responses.')
        parser.add_argument('--warmup_steps', type=int, default=4000, help='number of warm-up steps')
        parser.add_argument('--val_num', type=int, default=8000, help='size of the validation set')
        args = parser.parse_args()
        return args


    def create_logger(args):
        """Send log output to both the log file and the console."""
        logger = logging.getLogger(__name__)
        logger.setLevel(logging.INFO)
        formatter = logging.Formatter('%(asctime)s - %(levelname)s - %(message)s')

        # Handler that writes to the log file
        file_handler = logging.FileHandler(filename=args.log_path)
        file_handler.setFormatter(formatter)
        file_handler.setLevel(logging.INFO)
        logger.addHandler(file_handler)

        # Handler that writes to the console
        console = logging.StreamHandler()
        console.setLevel(logging.DEBUG)
        console.setFormatter(formatter)
        logger.addHandler(console)
        return logger


    def collate_fn(batch):
        # Pad inputs with 0 and labels with -100 so that padded positions are ignored by the loss.
        input_ids = rnn_utils.pad_sequence(batch, batch_first=True, padding_value=0)
        labels = rnn_utils.pad_sequence(batch, batch_first=True, padding_value=-100)
        return input_ids, labels

    def load_dataset(logger, args):
        """Load the training set and the validation set."""
        logger.info("loading training dataset and validating dataset")
        train_path = args.train_path
        with open(train_path, "rb") as f:
            input_list = pickle.load(f)

        # The first val_num dialogues form the validation set, the rest the training set.
        val_num = args.val_num
        input_list_train = input_list[val_num:]
        input_list_val = input_list[:val_num]
        train_dataset = MyDataset(input_list_train, args.max_len)
        val_dataset = MyDataset(input_list_val, args.max_len)
        return train_dataset, val_dataset


    def train_epoch(model, train_dataloader, optimizer, scheduler, logger, epoch, args):
        model.train()
        device = args.device
        ignore_index = args.ignore_index
        epoch_start_time = datetime.now()
        total_loss = 0  # sum of the loss over the whole epoch
        # Correctly predicted tokens and total predicted tokens in this epoch
        epoch_correct_num, epoch_total_num = 0, 0

        for batch_idx, (input_ids, labels) in enumerate(train_dataloader):
            # Catch CUDA out-of-memory errors
            try:
                input_ids = input_ids.to(device)
                labels = labels.to(device)
                outputs = model(input_ids, labels=labels)
                logits = outputs.logits
                loss = outputs.loss
                loss = loss.mean()

                # Token-level accuracy for this batch and running totals for the epoch
                batch_correct_num, batch_total_num = calculate_acc(logits, labels, ignore_index=ignore_index)
                epoch_correct_num += batch_correct_num
                epoch_total_num += batch_total_num
                batch_acc = batch_correct_num / batch_total_num

                total_loss += loss.item()
                if args.gradient_accumulation_steps > 1:
                    loss = loss / args.gradient_accumulation_steps

                loss.backward()
                # Gradient clipping
                torch.nn.utils.clip_grad_norm_(model.parameters(), args.max_grad_norm)

                # Update the parameters after accumulating gradients for the configured number of steps
                if (batch_idx + 1) % args.gradient_accumulation_steps == 0:
                    optimizer.step()
                    scheduler.step()
                    optimizer.zero_grad()

                if (batch_idx + 1) % args.log_step == 0:
                    # get_last_lr() replaces get_lr(), which is not meant to be called outside step()
                    logger.info("batch {} of epoch {}, loss {}, batch_acc {}, lr {}".format(
                        batch_idx + 1, epoch + 1, loss.item() * args.gradient_accumulation_steps,
                        batch_acc, scheduler.get_last_lr()))

                del input_ids, outputs

            except RuntimeError as exception:
                if "out of memory" in str(exception):
                    logger.info("WARNING: ran out of memory")
                    if hasattr(torch.cuda, 'empty_cache'):
                        torch.cuda.empty_cache()
                else:
                    logger.info(str(exception))
                    raise exception

        # Mean loss and accuracy of the current epoch
        epoch_mean_loss = total_loss / len(train_dataloader)
        epoch_mean_acc = epoch_correct_num / epoch_total_num
        logger.info("epoch {}: loss {}, predict_acc {}".format(epoch + 1, epoch_mean_loss, epoch_mean_acc))

        # Save a checkpoint for this epoch
        logger.info('saving model for epoch {}'.format(epoch + 1))
        model_path = join(args.save_model_path, 'epoch{}'.format(epoch + 1))
        if not os.path.exists(model_path):
            os.mkdir(model_path)
        model_to_save = model.module if hasattr(model, 'module') else model
        model_to_save.save_pretrained(model_path)
        logger.info('epoch {} finished'.format(epoch + 1))
        epoch_finish_time = datetime.now()
        logger.info('time for one epoch: {}'.format(epoch_finish_time - epoch_start_time))
        return epoch_mean_loss


    def validate_epoch(model, validate_dataloader, logger, epoch, args):
        logger.info("start validating")
        model.eval()
        device = args.device
        epoch_start_time = datetime.now()
        total_loss = 0
        # Catch CUDA out-of-memory errors
        try:
            with torch.no_grad():
                for batch_idx, (input_ids, labels) in enumerate(validate_dataloader):
                    input_ids = input_ids.to(device)
                    labels = labels.to(device)
                    outputs = model(input_ids, labels=labels)
                    loss = outputs.loss
                    loss = loss.mean()
                    total_loss += loss.item()
                    del input_ids, outputs

                # Mean loss of the current epoch
                epoch_mean_loss = total_loss / len(validate_dataloader)
                logger.info("validate epoch {}: loss {}".format(epoch + 1, epoch_mean_loss))
                epoch_finish_time = datetime.now()
                logger.info('time for validating one epoch: {}'.format(epoch_finish_time - epoch_start_time))
                return epoch_mean_loss
        except RuntimeError as exception:
            if "out of memory" in str(exception):
                logger.info("WARNING: ran out of memory")
                if hasattr(torch.cuda, 'empty_cache'):
                    torch.cuda.empty_cache()
            else:
                logger.info(str(exception))
                raise exception


    def train(model, logger, train_dataset, validate_dataset, args):
        train_dataloader = DataLoader(
            train_dataset, batch_size=args.batch_size, shuffle=True, num_workers=args.num_workers,
            collate_fn=collate_fn, drop_last=True)
        validate_dataloader = DataLoader(
            validate_dataset, batch_size=args.batch_size, shuffle=True, num_workers=args.num_workers,
            collate_fn=collate_fn, drop_last=True)
        early_stopping = EarlyStopping(args.patience, verbose=True, save_path=args.save_model_path)
        # Total number of optimizer steps over the whole run
        t_total = len(train_dataloader) // args.gradient_accumulation_steps * args.epochs
        # transformers.AdamW is deprecated in recent transformers releases;
        # torch.optim.AdamW with weight_decay=0.0 matches its default behavior.
        optimizer = optim.AdamW(model.parameters(), lr=args.lr, eps=args.eps, weight_decay=0.0)
        scheduler = transformers.get_linear_schedule_with_warmup(
            optimizer, num_warmup_steps=args.warmup_steps, num_training_steps=t_total)

        logger.info('starting training')
        # Per-epoch losses on the training and validation sets
        train_losses, validate_losses = [], []
        # Lowest validation loss seen so far
        best_val_loss = 10000
        for epoch in range(args.epochs):
            train_loss = train_epoch(
                model=model, train_dataloader=train_dataloader, optimizer=optimizer,
                scheduler=scheduler, logger=logger, epoch=epoch, args=args)
            train_losses.append(train_loss)

            validate_loss = validate_epoch(
                model=model, validate_dataloader=validate_dataloader,
                logger=logger, epoch=epoch, args=args)
            validate_losses.append(validate_loss)

            # Keep the model with the lowest validation loss (i.e. lowest perplexity)
            if validate_loss < best_val_loss:
                best_val_loss = validate_loss
                logger.info('saving current best model for epoch {}'.format(epoch + 1))
                model_path = join(args.save_model_path, 'min_ppl_model')
                if not os.path.exists(model_path):
                    os.mkdir(model_path)
                model_to_save = model.module if hasattr(model, 'module') else model
                model_to_save.save_pretrained(model_path)

            # patience == 0 disables early stopping
            if args.patience == 0:
                continue
            early_stopping(validate_loss, model)
            if early_stopping.early_stop:
                logger.info("Early stopping")
                break

        logger.info('training finished')
        logger.info("train_losses:{}".format(train_losses))
        logger.info("validate_losses:{}".format(validate_losses))


    def caculate_loss(logit, target, pad_idx, smoothing=True):
        if smoothing:
            # Shift so that position t predicts token t + 1, then apply label smoothing
            logit = logit[..., :-1, :].contiguous().view(-1, logit.size(2))
            target = target[..., 1:].contiguous().view(-1)
            eps = 0.1
            n_class = logit.size(-1)
            one_hot = torch.zeros_like(logit).scatter(1, target.view(-1, 1), 1)
            one_hot = one_hot * (1 - eps) + (1 - one_hot) * eps / (n_class - 1)
            log_prb = F.log_softmax(logit, dim=1)
            non_pad_mask = target.ne(pad_idx)
            loss = -(one_hot * log_prb).sum(dim=1)
            loss = loss.masked_select(non_pad_mask).mean()  # average over non-pad tokens
        else:
            logit = logit[..., :-1, :].contiguous().view(-1, logit.size(-1))
            labels = target[..., 1:].contiguous().view(-1)
            loss = F.cross_entropy(logit, labels, ignore_index=pad_idx)
        return loss


    def calculate_acc(logit, labels, ignore_index=-100):
        # Shift so that position t is compared with the token at position t + 1
        logit = logit[..., :-1, :].contiguous().view(-1, logit.size(-1))
        labels = labels[..., 1:].contiguous().view(-1)
        _, logit = logit.max(dim=-1)  # index of the highest-scoring token at each position
        # Positions whose label equals ignore_index are excluded from the count
        non_pad_mask = labels.ne(ignore_index)
        n_correct = logit.eq(labels).masked_select(non_pad_mask).sum().item()
        n_word = non_pad_mask.sum().item()
        return n_correct, n_word


    def main():
        args = set_args()
        # Restrict the process to the selected GPUs
        os.environ["CUDA_VISIBLE_DEVICES"] = args.device

        if args.batch_size < 2048 and args.warmup_steps <= 4000:
            print('[Warning] The warmup steps may be not enough.\n'
                  '(sz_b, warmup) = (2048, 4000) is the official setting.\n'
                  'Using smaller batch w/o longer warmup may cause '
                  'the warmup stage ends with only little data trained.')

        logger = create_logger(args)
        # Use the GPU only when the user allows it and one is available
        args.cuda = torch.cuda.is_available() and not args.no_cuda
        device = 'cuda:0' if args.cuda else 'cpu'
        args.device = device
        logger.info('using device:{}'.format(device))

        tokenizer = BertTokenizerFast(vocab_file=args.vocab_path, sep_token="[SEP]",
                                      pad_token="[PAD]", cls_token="[CLS]")
        args.sep_id = tokenizer.sep_token_id
        args.pad_id = tokenizer.pad_token_id
        args.cls_id = tokenizer.cls_token_id

        if not os.path.exists(args.save_model_path):
            os.mkdir(args.save_model_path)

        if args.pretrained_model:
            # Load the pretrained model
            model = GPT2LMHeadModel.from_pretrained(args.pretrained_model)
        else:
            # Initialize a new model from the config file
            model_config = GPT2Config.from_json_file(args.model_config)
            model = GPT2LMHeadModel(config=model_config)
        model = model.to(device)
        logger.info('model config:\n{}'.format(model.config.to_json_string()))
        assert model.config.vocab_size == tokenizer.vocab_size

        # Train on multiple GPUs in parallel when more than one is visible
        if args.cuda and torch.cuda.device_count() > 1:
            model = DataParallel(model).cuda()
            logger.info("use GPU {} to train".format(args.device))

        # Count the model parameters
        num_parameters = 0
        parameters = model.parameters()
        for parameter in parameters:
            num_parameters += parameter.numel()
        logger.info('number of model parameters: {}'.format(num_parameters))
        logger.info("args:{}".format(args))

        train_dataset, validate_dataset = load_dataset(logger, args)
        train(model, logger, train_dataset, validate_dataset, args)


    if __name__ == '__main__':
        main()
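train() derives the total number of optimizer steps as len(train_dataloader) // gradient_accumulation_steps * epochs and passes it, together with warmup_steps, to get_linear_schedule_with_warmup. The pure-Python sketch below reproduces the shape of that schedule (a linear ramp from 0 to the base rate, then a linear decay to 0), so the interaction between the two numbers can be checked without a GPU. `total_steps` and `lr_at` are hypothetical helpers, and the corpus size of 500000 dialogues is an illustrative assumption.

```python
def total_steps(num_examples, batch_size, grad_accum, epochs):
    """Optimizer steps as computed in train(); the dataloader uses drop_last=True."""
    batches_per_epoch = num_examples // batch_size
    return batches_per_epoch // grad_accum * epochs


def lr_at(step, base_lr, warmup_steps, t_total):
    """Learning rate after `step` optimizer steps: linear warmup, then linear decay."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, t_total - step)
    return base_lr * remaining / max(1, t_total - warmup_steps)


# Defaults from set_args(): batch_size=64, gradient_accumulation_steps=4,
# epochs=20, lr=2.6e-5, warmup_steps=4000, val_num=8000.
t_total = total_steps(num_examples=500000 - 8000, batch_size=64, grad_accum=4, epochs=20)
print(t_total)
for step in (0, 2000, 4000, t_total):
    print(step, lr_at(step, 2.6e-5, 4000, t_total))
```

With a small corpus, t_total can fall below the default warmup_steps of 4000. In that case the rate never reaches its peak and training ends during warmup, so a lower --warmup_steps is worth considering.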

The dataset.py file

    import torch
    from torch.utils.data import Dataset


    class MyDataset(Dataset):
        """Wraps the list of tokenized dialogues and truncates each one to max_len."""

        def __init__(self, input_list, max_len):
            self.input_list = input_list
            self.max_len = max_len

        def __getitem__(self, index):
            input_ids = self.input_list[index]
            input_ids = input_ids[:self.max_len]
            input_ids = torch.tensor(input_ids, dtype=torch.long)
            return input_ids

        def __len__(self):
            return len(self.input_list)
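MyDataset truncates each dialogue to max_len, and collate_fn in train.py then pads every batch to its longest sequence, using 0 for input_ids and -100 for labels so that padded positions are ignored by the loss. Below is a dependency-free sketch of the same logic on plain Python lists. The real code works on torch tensors through rnn_utils.pad_sequence; `truncate` and `pad_batch` are hypothetical names, and the token ids are made up.

```python
def truncate(input_ids, max_len):
    """Mirror MyDataset.__getitem__: keep at most max_len token ids."""
    return input_ids[:max_len]


def pad_batch(batch, padding_value):
    """Right-pad every sequence to the longest one in the batch."""
    longest = max(len(seq) for seq in batch)
    return [seq + [padding_value] * (longest - len(seq)) for seq in batch]


raw = [[101, 5, 6, 102], [101, 7, 102, 8, 9, 10, 102]]
batch = [truncate(ids, max_len=6) for ids in raw]

input_ids = pad_batch(batch, padding_value=0)   # what the model reads
labels = pad_batch(batch, padding_value=-100)   # -100 positions are skipped by the loss

print(input_ids)
print(labels)
```

Note that truncation simply cuts the list, so a dialogue longer than max_len can lose its final [SEP], as the second toy sequence does here.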

Training data preprocessing code: preprocess.py

    import argparse
    import logging
    import pickle

    from tqdm import tqdm
    from transformers import BertTokenizerFast


    def create_logger(log_path):
        """Send log output to both the log file and the console."""
        logger = logging.getLogger(__name__)
        logger.setLevel(logging.INFO)
        formatter = logging.Formatter('%(asctime)s - %(levelname)s - %(message)s')

        # Handler that writes to the log file
        file_handler = logging.FileHandler(filename=log_path)
        file_handler.setFormatter(formatter)
        file_handler.setLevel(logging.INFO)
        logger.addHandler(file_handler)

        # Handler that writes to the console
        console = logging.StreamHandler()
        console.setLevel(logging.DEBUG)
        console.setFormatter(formatter)
        logger.addHandler(console)
        return logger


    def preprocess():
        """
        Tokenize the raw corpus and turn each dialogue into the form
        "[CLS]utterance1[SEP]utterance2[SEP]utterance3[SEP]".
        """
        parser = argparse.ArgumentParser()
        parser.add_argument('--vocab_path', default='vocab/vocab.txt', type=str, required=False,
                            help='path to the vocabulary file')
        parser.add_argument('--log_path', default='data/preprocess.log', type=str, required=False,
                            help='where to store the preprocessing log')
        parser.add_argument('--train_path', default='50w_qa_data', type=str, required=False,
                            help='path to the raw training corpus')
        parser.add_argument('--save_path', default='data/train.pkl', type=str, required=False,
                            help='where to save the tokenized training set')
        args = parser.parse_args()

        logger = create_logger(args.log_path)

        tokenizer = BertTokenizerFast(vocab_file=args.vocab_path, sep_token="[SEP]",
                                      pad_token="[PAD]", cls_token="[CLS]")
        sep_id = tokenizer.sep_token_id
        cls_id = tokenizer.cls_token_id
        logger.info("preprocessing data,data path:{}, save path:{}".format(args.train_path, args.save_path))

        # Read the raw corpus
        with open(args.train_path, 'rb') as f:
            data = f.read().decode("utf-8")

        # With Windows line endings, dialogues are separated by a blank line;
        # otherwise each line holds one dialogue.
        if "\r\n" in data:
            train_data = data.split("\r\n\r\n")
        else:
            train_data = data.split("\n")
        logger.info("there are {} dialogue in dataset".format(len(train_data)))

        # Tokenize every dialogue and collect the results
        dialogue_len = []  # token count of each dialogue (collected but not used further here)
        dialogue_list = []
        for index, dialogue in enumerate(tqdm(train_data)):
            # Utterances are separated by line breaks (Windows format) or by tabs
            if "\r\n" in data:
                utterances = dialogue.split("\r\n")
            else:
                utterances = dialogue.split("\t")

            input_ids = [cls_id]  # every dialogue starts with [CLS]
            for utterance in utterances:
                input_ids += tokenizer.encode(utterance, add_special_tokens=False)
                input_ids.append(sep_id)  # [SEP] marks the end of each utterance
            dialogue_len.append(len(input_ids))
            dialogue_list.append(input_ids)

        with open(args.save_path, "wb") as f:
            pickle.dump(dialogue_list, f)


    if __name__ == '__main__':
        preprocess()
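The splitting rules in preprocess() depend on the line endings of the corpus. If the file contains "\r\n", dialogues are separated by a blank line and utterances by line breaks; otherwise each line is one dialogue and utterances are separated by tabs. The sketch below isolates that splitting step, with a toy character-level encoder standing in for BertTokenizerFast. The `encode` function, its ids, and the special-token ids 101 and 102 are illustrative assumptions.

```python
CLS_ID, SEP_ID = 101, 102  # placeholder ids for [CLS] and [SEP]


def split_corpus(data):
    """Return a list of dialogues, each a list of utterance strings."""
    if "\r\n" in data:
        return [d.split("\r\n") for d in data.split("\r\n\r\n")]
    return [d.split("\t") for d in data.split("\n")]


def encode(utterance):
    """Toy stand-in for tokenizer.encode(utterance, add_special_tokens=False)."""
    return [1000 + ord(ch) for ch in utterance]


def to_input_ids(utterances):
    """Build [CLS] u1 [SEP] u2 [SEP] ... as a flat id list."""
    input_ids = [CLS_ID]
    for utterance in utterances:
        input_ids += encode(utterance)
        input_ids.append(SEP_ID)
    return input_ids


tab_style = "hi\thello\nbye\tsee you"
crlf_style = "hi\r\nhello\r\n\r\nbye\r\nsee you"

print(split_corpus(tab_style))
print(split_corpus(crlf_style))
print(to_input_ids(split_corpus(tab_style)[0]))
```

Both layouts yield the same two dialogues. In this sketch a trailing newline at the end of a tab-style file produces an empty final dialogue, which is encoded as just [CLS][SEP], so stripping the corpus before splitting may be worthwhile.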