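Check-in, Day 34

Key points reviewed:

- Assessing CPU performance: look at the architecture generation, the number of cores, and the number of threads

- Assessing GPU performance: look at the VRAM size, the product tier, and the architecture generation

- Training on a GPU: move both the data and the model to the GPU device

- The `__call__` method of a class: why the forward pass can simply be written as `self.fc1(x)`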
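The last point can be illustrated without PyTorch. The sketch below is a simplified stand-in, not PyTorch's actual implementation: `TinyModule` and `Scale` are hypothetical names, and the real `nn.Module.__call__` does more work (such as running hooks) before it dispatches to `forward`.

```python
class TinyModule:
    """Hypothetical, simplified stand-in for nn.Module."""

    def __call__(self, *args, **kwargs):
        # Calling the instance like a function dispatches to forward()
        return self.forward(*args, **kwargs)

    def forward(self, *args, **kwargs):
        raise NotImplementedError


class Scale(TinyModule):
    """Toy layer that multiplies its input by a fixed factor."""

    def __init__(self, factor):
        self.factor = factor

    def forward(self, x):
        return x * self.factor


layer = Scale(3)
implicit = layer(2)          # goes through __call__, then forward
explicit = layer.forward(2)  # calls forward directly
```

Both calls return the same value, which is why `self.fc1(x)` works inside a model's `forward`: `self.fc1` is itself a module instance, and calling it runs its own `forward`.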

P.S. During training, you can run nvidia-smi on the command line to check GPU memory usage.
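If you would rather read the same numbers from Python, one option is to call `nvidia-smi` with its CSV query flags and parse the result. This is a minimal sketch: the helper names `parse_memory_csv` and `query_gpu_memory` are hypothetical, and the sample line parsed at the end uses made-up values, not a measurement.

```python
import subprocess

# nvidia-smi query flags; csv,noheader,nounits yields lines such as "1024, 8188" (MiB)
QUERY_CMD = [
    "nvidia-smi",
    "--query-gpu=memory.used,memory.total",
    "--format=csv,noheader,nounits",
]


def parse_memory_csv(text):
    """Parse 'used, total' lines into a list of (used_mib, total_mib) integer tuples."""
    rows = []
    for line in text.strip().splitlines():
        used, total = (int(field) for field in line.split(","))
        rows.append((used, total))
    return rows


def query_gpu_memory():
    """Run nvidia-smi and return one (used_mib, total_mib) tuple per GPU."""
    result = subprocess.run(QUERY_CMD, capture_output=True, text=True, check=True)
    return parse_memory_csv(result.stdout)


# Parsing a made-up sample line (illustrative values only)
sample = parse_memory_csv("1024, 8188\n")
```

`query_gpu_memory()` only works on a machine where `nvidia-smi` is on the PATH; the parser itself can be checked anywhere.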

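Homework

Review today's material and work through the code again to consolidate it. Think about why this problem occurs (in the runs below, training on the GPU takes longer than training on the CPU).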

import torch

import torch.nn as nn

import torch.optim as optim

from sklearn.datasets import load_iris

from sklearn.model_selection import train_test_split

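import numpy as np

# We again use the iris dataset (4 features, 3 classes) as today's dataset
# Load the iris dataset
iris = load_iris()
X = iris.data  # features
y = iris.target  # labels

# Split into training and test sets
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

# # Print the shapes
# print(X_train.shape)
# print(y_train.shape)
# print(X_test.shape)
# print(y_test.shape)

# Normalize the data: neural networks are sensitive to the scale of their inputs, and normalization is the most common way to handle that
from sklearn.preprocessing import MinMaxScaler
scaler = MinMaxScaler()
X_train = scaler.fit_transform(X_train)
X_test = scaler.transform(X_test)  # reuse the scaler fitted on the training set so both sets are scaled identically

# Convert the data to PyTorch tensors, since PyTorch trains on tensors
# y_train and y_test hold integer class labels, so convert them to long; as float32 they would print as 1.0, 0.0
X_train = torch.FloatTensor(X_train)
y_train = torch.LongTensor(y_train)
X_test = torch.FloatTensor(X_test)
y_test = torch.LongTensor(y_test)

class MLP(nn.Module):  # define a multilayer perceptron (MLP) that inherits from nn.Module
    def __init__(self):  # constructor
        super(MLP, self).__init__()  # call the parent-class constructor
        # The three lines above are boilerplate; the layers below are custom
        self.fc1 = nn.Linear(4, 10)  # input layer to hidden layer
        self.relu = nn.ReLU()
        self.fc2 = nn.Linear(10, 3)  # hidden layer to output layer
        # The output layer needs no activation: the cross-entropy loss used later applies (log-)softmax internally, turning the outputs into probabilities

    def forward(self, x):
        out = self.fc1(x)
        out = self.relu(out)
        out = self.fc2(out)
        return out

# Instantiate the model
model = MLP()

# Use cross-entropy loss for the classification problem
criterion = nn.CrossEntropyLoss()

# Use the stochastic gradient descent optimizer
optimizer = optim.SGD(model.parameters(), lr=0.01)

# # Use an optimizer with an adaptive learning rate
# optimizer = optim.Adam(model.parameters(), lr=0.001)

# Train the model
num_epochs = 20000  # number of training epochs

# Stores the loss of every epoch
losses = []

import time
start_time = time.time()  # record the start time

for epoch in range(num_epochs):  # range starts at 0, so epoch starts at 0
    # Forward pass
    outputs = model.forward(X_train)  # explicit call to forward
    # outputs = model(X_train)  # the usual form: calls forward implicitly through the model's __call__ method
    loss = criterion(outputs, y_train)  # outputs are the model's predictions, y_train the true labels

    # Backward pass and optimization
    optimizer.zero_grad()  # zero the gradients: PyTorch accumulates them, so they must be cleared every iteration (gradient accumulation is the trick of simulating a large batch size with several small batches)
    loss.backward()  # backpropagate to compute the gradients
    optimizer.step()  # update the parameters

    # Record the loss
    losses.append(loss.item())

    # Print training progress
    if (epoch + 1) % 100 == 0:  # epoch is 0-based, so epoch + 1 is the 1-based count; print every 100 epochs
        print(f'Epoch [{epoch+1}/{num_epochs}], Loss: {loss.item():.4f}')

time_all = time.time() - start_time  # total training time
print(f'Training time: {time_all:.2f} seconds')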

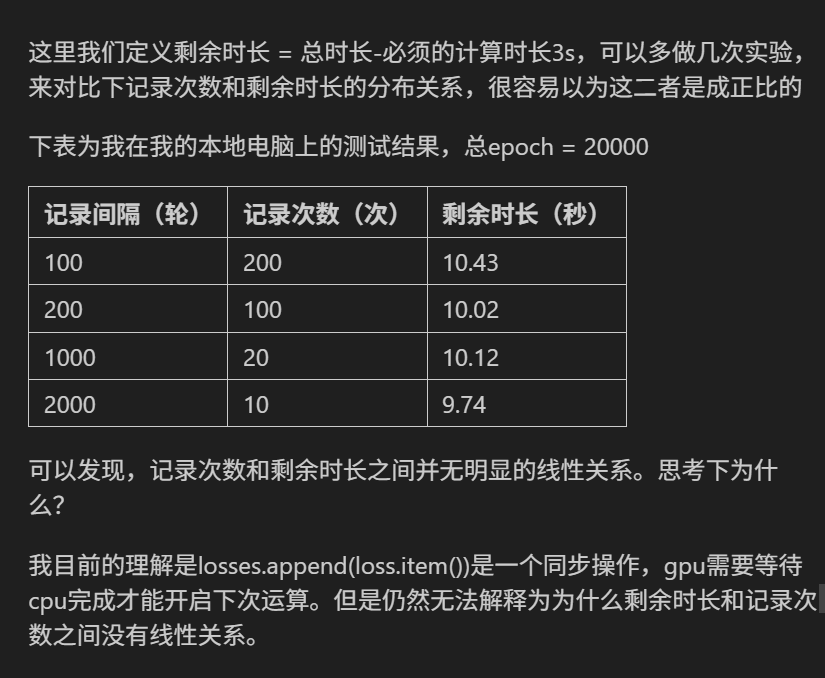

import matplotlib.pyplot as plt

# Plot the loss curve

plt.plot(range(num_epochs), losses)

plt.xlabel('Epoch')

plt.ylabel('Loss')

plt.title('Training Loss over Epochs')

plt.show()

Epoch [100/20000], Loss: 1.0778

Epoch [200/20000], Loss: 1.0504

Epoch [300/20000], Loss: 1.0184

Epoch [400/20000], Loss: 0.9754

Epoch [500/20000], Loss: 0.9234

Epoch [600/20000], Loss: 0.8646

Epoch [700/20000], Loss: 0.8025

Epoch [800/20000], Loss: 0.7415

Epoch [900/20000], Loss: 0.6859

Epoch [1000/20000], Loss: 0.6378

Epoch [1100/20000], Loss: 0.5975

Epoch [1200/20000], Loss: 0.5639

Epoch [1300/20000], Loss: 0.5355

Epoch [1400/20000], Loss: 0.5112

Epoch [1500/20000], Loss: 0.4899

Epoch [1600/20000], Loss: 0.4707

Epoch [1700/20000], Loss: 0.4530

Epoch [1800/20000], Loss: 0.4366

Epoch [1900/20000], Loss: 0.4212

Epoch [2000/20000], Loss: 0.4065

Epoch [2100/20000], Loss: 0.3924

Epoch [2200/20000], Loss: 0.3789

Epoch [2300/20000], Loss: 0.3659

Epoch [2400/20000], Loss: 0.3534

Epoch [2500/20000], Loss: 0.3414

...

Epoch [19800/20000], Loss: 0.0615

Epoch [19900/20000], Loss: 0.0614

Epoch [20000/20000], Loss: 0.0612

Training time: 6.18 seconds
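The model above returns raw scores (logits) because `nn.CrossEntropyLoss` applies log-softmax to them before taking the negative log-likelihood. Here is a minimal pure-Python sketch of that computation for a single sample; the function names and the logit values are illustrative assumptions, not numbers from the run above.

```python
import math


def log_softmax(logits):
    """Numerically stable log-softmax of a list of raw scores."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(z - m) for z in logits))
    return [z - log_sum for z in logits]


def cross_entropy(logits, target):
    """Negative log-probability assigned to the true class index."""
    return -log_softmax(logits)[target]


logits = [2.0, 0.5, -1.0]  # made-up raw outputs for the 3 iris classes
probs = [math.exp(v) for v in log_softmax(logits)]  # softmax probabilities
loss = cross_entropy(logits, target=0)
uniform_loss = cross_entropy([0.0, 0.0, 0.0], target=1)  # equals ln(3)
```

A model that guesses uniformly over three classes has a loss of ln(3) ≈ 1.0986, which is close to where the logged loss starts (1.0778 at epoch 100).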

import wmi  # third-party package, Windows only

c = wmi.WMI()
processors = c.Win32_Processor()
for processor in processors:
    print(f"CPU model: {processor.Name}")
    print(f"Cores: {processor.NumberOfCores}")
    print(f"Threads: {processor.NumberOfLogicalProcessors}")

CPU model: 12th Gen Intel(R) Core(TM) i5-12490F

Cores: 6

Threads: 12

import torch

# Check whether CUDA is available
if torch.cuda.is_available():
    print("CUDA is available!")
    # Number of available CUDA devices
    device_count = torch.cuda.device_count()
    print(f"Number of available CUDA devices: {device_count}")
    # Index of the CUDA device currently in use
    current_device = torch.cuda.current_device()
    print(f"Index of the current CUDA device: {current_device}")
    # Name of the current CUDA device
    device_name = torch.cuda.get_device_name(current_device)
    print(f"Name of the current CUDA device: {device_name}")
    # CUDA version
    cuda_version = torch.version.cuda
    print(f"CUDA version: {cuda_version}")
    # cuDNN version (if available)
    print("cuDNN version:", torch.backends.cudnn.version())
else:
    print("CUDA is not available.")

CUDA is available!

Number of available CUDA devices: 1

Index of the current CUDA device: 0

Name of the current CUDA device: NVIDIA GeForce RTX 4060

CUDA version: 12.1

cuDNN version: 90100
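The integer printed for cuDNN packs the version number into one value. The sketch below assumes the encoding is major * 10000 + minor * 100 + patch from cuDNN 9 onward and major * 1000 + minor * 100 + patch for earlier releases; `decode_cudnn_version` is a hypothetical helper, so verify the scheme against your installed cuDNN headers before relying on it.

```python
def decode_cudnn_version(v):
    """Split a packed cuDNN version integer into (major, minor, patch)."""
    if v >= 90000:
        # Assumed cuDNN 9+ scheme: major * 10000 + minor * 100 + patch
        major, rest = divmod(v, 10000)
    else:
        # Assumed pre-9 scheme: major * 1000 + minor * 100 + patch
        major, rest = divmod(v, 1000)
    minor, patch = divmod(rest, 100)
    return major, minor, patch


version = decode_cudnn_version(90100)
```

Under that assumption, the value 90100 reported above reads as cuDNN 9.1.0.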

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

print(f"Using device: {device}")

# Load the iris dataset
iris = load_iris()
X = iris.data  # features
y = iris.target  # labels

# Split into training and test sets
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

# Normalize the data
from sklearn.preprocessing import MinMaxScaler
scaler = MinMaxScaler()
X_train = scaler.fit_transform(X_train)
X_test = scaler.transform(X_test)

# Convert the data to PyTorch tensors and move them to the GPU
# Cross-entropy loss for classification requires labels of type long
# Tensors have a to(device) method that moves them to the given device
X_train = torch.FloatTensor(X_train).to(device)
y_train = torch.LongTensor(y_train).to(device)
X_test = torch.FloatTensor(X_test).to(device)
y_test = torch.LongTensor(y_test).to(device)

# Instantiate the model (the MLP class defined earlier) and move it to the same device as the data;
# without this step the forward pass fails with a device-mismatch error
model = MLP().to(device)

# Define the loss function and the optimizer
criterion = nn.CrossEntropyLoss()
optimizer = optim.SGD(model.parameters(), lr=0.01)

# Train the model
num_epochs = 20000
losses = []
start_time = time.time()

for epoch in range(num_epochs):
    # Forward pass
    outputs = model(X_train)
    loss = criterion(outputs, y_train)

    # Backward pass and optimization
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()

    # Record the loss
    losses.append(loss.item())

    # Print training progress
    if (epoch + 1) % 100 == 0:
        print(f'Epoch [{epoch+1}/{num_epochs}], Loss: {loss.item():.4f}')

time_all = time.time() - start_time

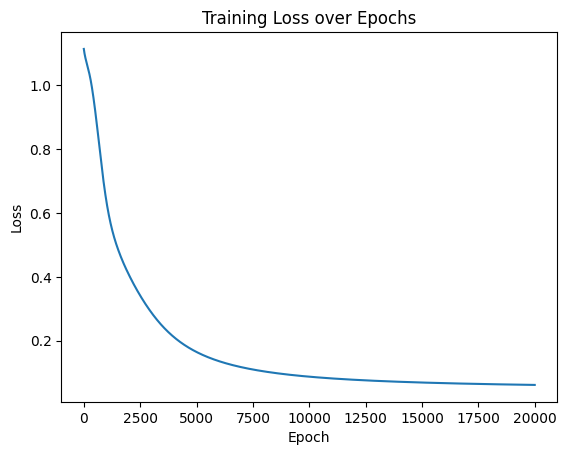

print(f'Training time: {time_all:.2f} seconds')

# Plot the loss curve

plt.plot(range(num_epochs), losses)

plt.xlabel('Epoch')

plt.ylabel('Loss')

plt.title('Training Loss over Epochs')

plt.show()

Epoch [100/20000], Loss: 1.0682

Epoch [200/20000], Loss: 0.9958

Epoch [300/20000], Loss: 0.9250

Epoch [400/20000], Loss: 0.8523

Epoch [500/20000], Loss: 0.7798

Epoch [600/20000], Loss: 0.7127

Epoch [700/20000], Loss: 0.6547

Epoch [800/20000], Loss: 0.6064

Epoch [900/20000], Loss: 0.5668

Epoch [1000/20000], Loss: 0.5343

Epoch [1100/20000], Loss: 0.5074

Epoch [1200/20000], Loss: 0.4844

Epoch [1300/20000], Loss: 0.4645

Epoch [1400/20000], Loss: 0.4469

Epoch [1500/20000], Loss: 0.4310

Epoch [1600/20000], Loss: 0.4164

Epoch [1700/20000], Loss: 0.4029

Epoch [1800/20000], Loss: 0.3903

Epoch [1900/20000], Loss: 0.3784

Epoch [2000/20000], Loss: 0.3671

Epoch [2100/20000], Loss: 0.3563

Epoch [2200/20000], Loss: 0.3458

Epoch [2300/20000], Loss: 0.3357

Epoch [2400/20000], Loss: 0.3260

Epoch [2500/20000], Loss: 0.3165

...

Epoch [19800/20000], Loss: 0.0623

Epoch [19900/20000], Loss: 0.0622

Epoch [20000/20000], Loss: 0.0621

Training time: 15.44 seconds
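One way to approach the homework question is to convert the two reported totals into a per-epoch cost. The figures below are the training times logged above (6.18 s on the CPU, 15.44 s on the GPU, 20000 epochs each); the interpretation that follows the code is a hypothesis, not something the logs prove.

```python
NUM_EPOCHS = 20000

# Total training times reported in the logs above, in seconds
reported = {"cpu": 6.18, "gpu": 15.44}

# Average wall-clock cost of one epoch, in milliseconds
per_epoch_ms = {name: total / NUM_EPOCHS * 1000 for name, total in reported.items()}

# How many times longer the GPU run took
slowdown = reported["gpu"] / reported["cpu"]

for name, ms in per_epoch_ms.items():
    print(f"{name}: {ms:.3f} ms per epoch")
print(f"GPU run took {slowdown:.2f}x as long")
```

Each epoch is one full-batch step over 120 training samples with 4 features, so there is very little arithmetic to parallelize. A plausible explanation is that fixed per-step costs on the GPU, such as launching kernels and copying the loss back to the CPU on every `loss.item()` call, outweigh the computation itself.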

# Now that we know where the time goes, optimize accordingly

import torch

import torch.nn as nn

import torch.optim as optim

from sklearn.datasets import load_iris

from sklearn.model_selection import train_test_split

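import numpy as np

# We again use the iris dataset (4 features, 3 classes) as today's dataset
# Load the iris dataset
iris = load_iris()
X = iris.data  # features
y = iris.target  # labels

# Split into training and test sets
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

# # Print the shapes
# print(X_train.shape)
# print(y_train.shape)
# print(X_test.shape)
# print(y_test.shape)

# Normalize the data: neural networks are sensitive to the scale of their inputs, and normalization is the most common way to handle that
from sklearn.preprocessing import MinMaxScaler
scaler = MinMaxScaler()
X_train = scaler.fit_transform(X_train)
X_test = scaler.transform(X_test)  # reuse the scaler fitted on the training set so both sets are scaled identically

# Convert the data to PyTorch tensors, since PyTorch trains on tensors
# y_train and y_test hold integer class labels, so convert them to long; as float32 they would print as 1.0, 0.0
X_train = torch.FloatTensor(X_train)
y_train = torch.LongTensor(y_train)
X_test = torch.FloatTensor(X_test)
y_test = torch.LongTensor(y_test)

class MLP(nn.Module):  # define a multilayer perceptron (MLP) that inherits from nn.Module
    def __init__(self):  # constructor
        super(MLP, self).__init__()  # call the parent-class constructor
        # The three lines above are boilerplate; the layers below are custom
        self.fc1 = nn.Linear(4, 10)  # input layer to hidden layer
        self.relu = nn.ReLU()
        self.fc2 = nn.Linear(10, 3)  # hidden layer to output layer
        # The output layer needs no activation: the cross-entropy loss used later applies (log-)softmax internally, turning the outputs into probabilities

    def forward(self, x):
        out = self.fc1(x)
        out = self.relu(out)
        out = self.fc2(out)
        return out

# Instantiate the model
model = MLP()

# Use cross-entropy loss for the classification problem
criterion = nn.CrossEntropyLoss()

# Use the stochastic gradient descent optimizer
optimizer = optim.SGD(model.parameters(), lr=0.01)

# # Use an optimizer with an adaptive learning rate
# optimizer = optim.Adam(model.parameters(), lr=0.001)

# Train the model
num_epochs = 20000  # number of training epochs

# Would store the loss of every epoch (recording is disabled in the loop for this run)
losses = []

import time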

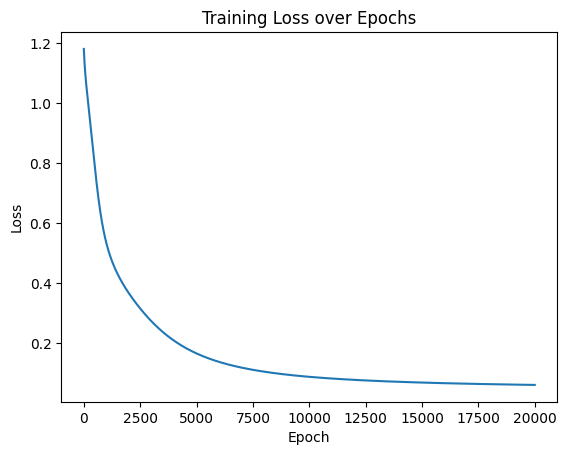

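start_time = time.time()  # record the start time

for epoch in range(num_epochs):  # range starts at 0, so epoch starts at 0
    # Forward pass
    outputs = model.forward(X_train)  # explicit call to forward
    # outputs = model(X_train)  # the usual form: calls forward implicitly through the model's __call__ method
    loss = criterion(outputs, y_train)  # outputs are the model's predictions, y_train the true labels

    # Backward pass and optimization
    optimizer.zero_grad()  # zero the gradients: PyTorch accumulates them, so they must be cleared every iteration (gradient accumulation is the trick of simulating a large batch size with several small batches)
    loss.backward()  # backpropagate to compute the gradients
    optimizer.step()  # update the parameters

    # Record the loss (disabled for this run)
    # losses.append(loss.item())

    # Print training progress
    if (epoch + 1) % 100 == 0:  # epoch is 0-based, so epoch + 1 is the 1-based count; print every 100 epochs
        print(f'Epoch [{epoch+1}/{num_epochs}], Loss: {loss.item():.4f}')

time_all = time.time() - start_time  # total training time

print(f'Training time: {time_all:.2f} seconds')

Epoch [100/20000], Loss: 1.1129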

Epoch [200/20000], Loss: 1.0969

Epoch [300/20000], Loss: 1.0826

Epoch [400/20000], Loss: 1.0661

Epoch [500/20000], Loss: 1.0449

Epoch [600/20000], Loss: 1.0170

Epoch [700/20000], Loss: 0.9807

Epoch [800/20000], Loss: 0.9347

Epoch [900/20000], Loss: 0.8819

Epoch [1000/20000], Loss: 0.8254

Epoch [1100/20000], Loss: 0.7680

Epoch [1200/20000], Loss: 0.7132

Epoch [1300/20000], Loss: 0.6635

Epoch [1400/20000], Loss: 0.6202

Epoch [1500/20000], Loss: 0.5832

Epoch [1600/20000], Loss: 0.5517

Epoch [1700/20000], Loss: 0.5246

Epoch [1800/20000], Loss: 0.5011

Epoch [1900/20000], Loss: 0.4801

Epoch [2000/20000], Loss: 0.4611

Epoch [2100/20000], Loss: 0.4437

Epoch [2200/20000], Loss: 0.4275

Epoch [2300/20000], Loss: 0.4121

Epoch [2400/20000], Loss: 0.3975

Epoch [2500/20000], Loss: 0.3836

...

Epoch [19800/20000], Loss: 0.0610

Epoch [19900/20000], Loss: 0.0609

Epoch [20000/20000], Loss: 0.0608

Training time: 5.99 seconds
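The comment on `optimizer.zero_grad()` in the training loop mentions gradient accumulation. As a worked example of why it can stand in for a larger batch, the sketch below uses a one-parameter model `y = w * x` with a mean-squared-error loss; the data and the helper `mse_grad` are made up for illustration. With equal-sized micro-batches, summing each micro-batch gradient divided by the number of micro-batches reproduces the full-batch gradient.

```python
def mse_grad(w, xs, ys):
    """Gradient of mean((w * x - y) ** 2) with respect to w."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


w = 0.5
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]

# Gradient computed on the full batch of 4 samples
full_grad = mse_grad(w, xs, ys)

# The same gradient accumulated over 2 micro-batches of 2 samples each
micro_batches = [(xs[:2], ys[:2]), (xs[2:], ys[2:])]
accumulated = 0.0
for mb_x, mb_y in micro_batches:
    # Dividing by the number of micro-batches mirrors scaling the loss before backward()
    accumulated += mse_grad(w, mb_x, mb_y) / len(micro_batches)
# Only at this point would the optimizer step and the gradients be zeroed
```

In the training loops here every step already uses the full training set, so the gradients are simply cleared on each iteration.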

import torch

import torch.nn as nn

import torch.optim as optim

from sklearn.datasets import load_iris

from sklearn.model_selection import train_test_split

from sklearn.preprocessing import MinMaxScaler

import time

import matplotlib.pyplot as plt

# Select the GPU device
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
print(f"Using device: {device}")

# Load the iris dataset
iris = load_iris()
X = iris.data  # features
y = iris.target  # labels

# Split into training and test sets
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

# Normalize the data
scaler = MinMaxScaler()
X_train = scaler.fit_transform(X_train)
X_test = scaler.transform(X_test)

# Convert the data to PyTorch tensors and move them to the GPU
X_train = torch.FloatTensor(X_train).to(device)
y_train = torch.LongTensor(y_train).to(device)
X_test = torch.FloatTensor(X_test).to(device)
y_test = torch.LongTensor(y_test).to(device)

class MLP(nn.Module):
    def __init__(self):
        super(MLP, self).__init__()
        self.fc1 = nn.Linear(4, 10)  # input layer to hidden layer
        self.relu = nn.ReLU()
        self.fc2 = nn.Linear(10, 3)  # hidden layer to output layer

    def forward(self, x):
        out = self.fc1(x)
        out = self.relu(out)
        out = self.fc2(out)
        return out

# Instantiate the model and move it to the GPU
model = MLP().to(device)

# Use cross-entropy loss for the classification problem
criterion = nn.CrossEntropyLoss()

# Use the stochastic gradient descent optimizer
optimizer = optim.SGD(model.parameters(), lr=0.01)

# Train the model
num_epochs = 20000  # number of training epochs

# Stores the loss recorded every 200 epochs
losses = []

start_time = time.time()  # record the start time

for epoch in range(num_epochs):
    # Forward pass
    outputs = model(X_train)  # implicit call to forward
    loss = criterion(outputs, y_train)

    # Backward pass and optimization
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()

    # Record (and print) the loss every 200 epochs
    if (epoch + 1) % 200 == 0:
        losses.append(loss.item())  # item() returns a Python number; loss is a scalar tensor
        print(f'Epoch [{epoch+1}/{num_epochs}], Loss: {loss.item():.4f}')

    # Print training progress every 2000 epochs; these epochs are also multiples of 200, so their line appears twice in the log
    if (epoch + 1) % 2000 == 0:
        print(f'Epoch [{epoch+1}/{num_epochs}], Loss: {loss.item():.4f}')

time_all = time.time() - start_time  # total training time

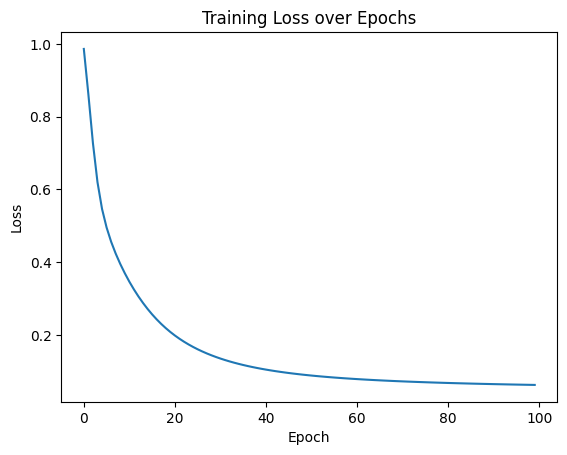

print(f'Training time: {time_all:.2f} seconds')

# Plot the loss curve (one point per recorded loss, i.e. one point every 200 epochs)

plt.plot(range(len(losses)), losses)

plt.xlabel('Epoch')

plt.ylabel('Loss')

plt.title('Training Loss over Epochs')

plt.show()

Using device: cuda:0

Epoch [200/20000], Loss: 0.9866

Epoch [400/20000], Loss: 0.8616

Epoch [600/20000], Loss: 0.7275

Epoch [800/20000], Loss: 0.6199

Epoch [1000/20000], Loss: 0.5462

Epoch [1200/20000], Loss: 0.4952

Epoch [1400/20000], Loss: 0.4560

Epoch [1600/20000], Loss: 0.4234

Epoch [1800/20000], Loss: 0.3949

Epoch [2000/20000], Loss: 0.3694

Epoch [2000/20000], Loss: 0.3694

Epoch [2200/20000], Loss: 0.3460

Epoch [2400/20000], Loss: 0.3248

Epoch [2600/20000], Loss: 0.3054

Epoch [2800/20000], Loss: 0.2875

Epoch [3000/20000], Loss: 0.2711

Epoch [3200/20000], Loss: 0.2561

Epoch [3400/20000], Loss: 0.2424

Epoch [3600/20000], Loss: 0.2298

Epoch [3800/20000], Loss: 0.2183

Epoch [4000/20000], Loss: 0.2077

Epoch [4000/20000], Loss: 0.2077

Epoch [4200/20000], Loss: 0.1980

Epoch [4400/20000], Loss: 0.1891

...

Epoch [19800/20000], Loss: 0.0623

Epoch [20000/20000], Loss: 0.0621

Epoch [20000/20000], Loss: 0.0621

Training time: 13.54 seconds
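The last run records the loss only every 200 epochs, so `losses` holds 100 values and `range(len(losses))` on the x-axis counts records rather than epochs. The sketch below lists the epochs at which a value is recorded; `logged_epochs` is a hypothetical helper name, and the condition it uses is the one from the training loop.

```python
def logged_epochs(num_epochs, interval):
    """1-based epoch numbers at which (epoch + 1) % interval == 0 holds."""
    return [epoch + 1 for epoch in range(num_epochs) if (epoch + 1) % interval == 0]


num_epochs = 20000

every_epoch = logged_epochs(num_epochs, 1)  # loss recorded on every epoch
every_200 = logged_epochs(num_epochs, 200)  # loss recorded every 200 epochs

# Number of loss values recorded (and copied back from the device) in each setup
print(len(every_epoch), len(every_200))

# every_200 can serve as the x-axis so the plot shows real epoch numbers,
# e.g. plt.plot(every_200, losses) instead of plt.plot(range(len(losses)), losses)
```

Recording 100 values instead of 20000 removes most of the per-epoch `loss.item()` calls, which is the change this last run makes compared with the first GPU run.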

@浙大疏锦行