Fixing torch.distributed.elastic.multiprocessing.errors.ChildFailedError in multi-GPU runs

Running PyTorch on multiple GPUs failed with the following error:

    E0619 10:29:15.774000 5065 site-packages/torch/distributed/elastic/multiprocessing/api.py:874] failed (exitcode: -11) local_rank: 0 (pid: 5184) of binary: /root/miniconda3/bin/python
    Traceback (most recent call last):
      File "/root/miniconda3/bin/torchrun", line 8, in <module>
        sys.exit(main())
      File "/root/miniconda3/lib/python3.10/site-packages/torch/distributed/elastic/multiprocessing/errors/__init__.py", line 355, in wrapper
        return f(*args, **kwargs)
      File "/root/miniconda3/lib/python3.10/site-packages/torch/distributed/run.py", line 892, in main
        run(args)
      File "/root/miniconda3/lib/python3.10/site-packages/torch/distributed/run.py", line 883, in run
        elastic_launch(
      File "/root/miniconda3/lib/python3.10/site-packages/torch/distributed/launcher/api.py", line 139, in __call__
        return launch_agent(self._config, self._entrypoint, list(args))
      File "/root/miniconda3/lib/python3.10/site-packages/torch/distributed/launcher/api.py", line 270, in launch_agent
        raise ChildFailedError(
    torch.distributed.elastic.multiprocessing.errors.ChildFailedError:
    /root/autodl-tmp/LLaMA-Factory/src/llamafactory/launcher.py FAILED
    Failures:
      <NO_OTHER_FAILURES>
    Root Cause (first observed failure):
    [0]:
      time      : 2025-06-19_10:29:15
      host      : autodl-container-f5de4b862a-e994ae7c
      rank      : 0 (local_rank: 0)
      exitcode  : -11 (pid: 5184)
      error_file: <N/A>
      traceback : Signal 11 (SIGSEGV) received by PID 5184

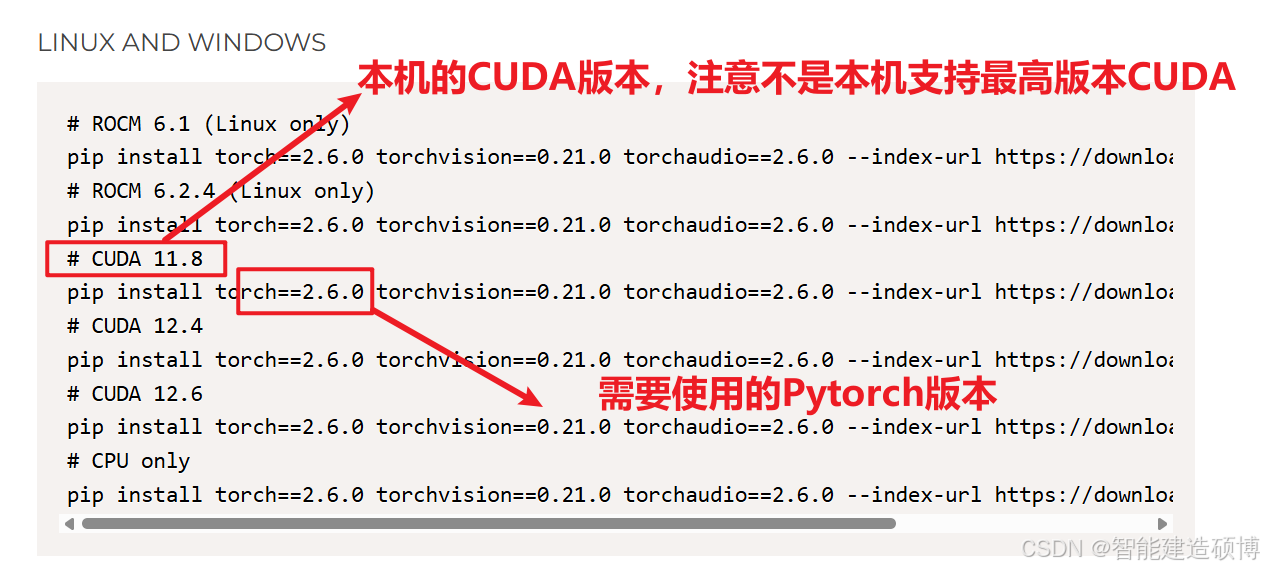
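The key line in the log is `exitcode: -11`. A negative exit code means the worker was killed by a signal, and the number is the signal number. The following is a minimal sketch of how such a code can be decoded with Python's standard `signal` module; the helper name `describe_exitcode` is made up for illustration and is not part of PyTorch.

```python
import signal


def describe_exitcode(exitcode: int) -> str:
    """Return a readable description of a child process exit code."""
    if exitcode < 0:
        # Negative values mean "terminated by signal number -exitcode".
        try:
            name = signal.Signals(-exitcode).name
        except ValueError:
            return f"killed by unknown signal {-exitcode}"
        return f"killed by signal {-exitcode} ({name})"
    if exitcode == 0:
        return "exited normally"
    return f"exited with status {exitcode}"


if __name__ == "__main__":
    print(describe_exitcode(-11))
```

For the log above this confirms that the process died from a segmentation fault (SIGSEGV) in native code rather than from a Python exception, which is why no Python traceback from the training script appears.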

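Solution: install a PyTorch build that matches your CUDA version. Exit code -11 means the worker process was killed by signal 11 (SIGSEGV); in this case the crash went away after installing a PyTorch build that matches the CUDA version. The matching releases are listed here: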

https://pytorch.org/get-started/previous-versions/

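Note: the code below shows the CUDA version that the installed PyTorch was built with. The highest CUDA version supported by the GPU driver is shown separately, in the header of the `nvidia-smi` output.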

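    import torch  # the import succeeds only if PyTorch is installed
    print(torch.cuda.is_available())  # True means CUDA can be used
    print(torch.cuda.device_count())  # number of visible GPUs; 0 means none were found
    print(torch.version.cuda)         # CUDA version this PyTorch build was compiled against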
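To compare the two versions automatically, something like the sketch below could be used. It assumes the `nvidia-smi` header contains the text `CUDA Version: X.Y`, and it applies a conservative rule: the PyTorch build's CUDA version should not be newer than what the driver reports. The function names are hypothetical, and CUDA minor-version compatibility means a slightly newer build can sometimes still work, so treat a failed check as a hint rather than proof.

```python
import re
import shutil
import subprocess
from typing import Optional, Tuple


def parse_driver_cuda(smi_output: str) -> Optional[Tuple[int, int]]:
    """Extract (major, minor) from the 'CUDA Version: X.Y' header of nvidia-smi."""
    match = re.search(r"CUDA Version:\s*(\d+)\.(\d+)", smi_output)
    if match is None:
        return None
    return int(match.group(1)), int(match.group(2))


def parse_version(text: str) -> Tuple[int, int]:
    """Turn a string such as '12.1' into (12, 1)."""
    major, minor = text.split(".")[:2]
    return int(major), int(minor)


def build_fits_driver(build_cuda: str, driver_cuda: Tuple[int, int]) -> bool:
    """Conservative check: the build's CUDA version is not newer than the driver's."""
    return parse_version(build_cuda) <= driver_cuda


def main() -> None:
    if shutil.which("nvidia-smi") is None:
        print("nvidia-smi not found; cannot read the driver's CUDA version")
        return
    result = subprocess.run(["nvidia-smi"], capture_output=True, text=True)
    driver = parse_driver_cuda(result.stdout)
    if driver is None:
        print("could not find 'CUDA Version' in the nvidia-smi output")
        return
    try:
        import torch
    except ImportError:
        print("PyTorch is not installed")
        return
    if torch.version.cuda is None:
        print("this PyTorch build is CPU-only")
        return
    print(f"driver supports CUDA {driver[0]}.{driver[1]}, "
          f"PyTorch built with CUDA {torch.version.cuda}")
    print("looks compatible" if build_fits_driver(torch.version.cuda, driver)
          else "possible mismatch")


if __name__ == "__main__":
    main()
```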

On that page, find the release built for your CUDA version, copy the complete install command, and run it.
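After reinstalling, it can help to confirm that every GPU works in a single process before launching the multi-GPU job again. The following is a rough sketch of such a smoke test, not part of the original fix; `check_gpus` is a hypothetical helper name, and it only uses basic `torch.cuda` calls.

```python
from typing import List


def check_gpus() -> List[str]:
    """Run a tiny computation on every visible GPU and report the result."""
    try:
        import torch
    except ImportError:
        return ["PyTorch is not installed"]
    if not torch.cuda.is_available():
        return ["CUDA is not available to this PyTorch build"]
    report = []
    for index in range(torch.cuda.device_count()):
        # Allocate a small tensor on the device and reduce it there.
        x = torch.ones(1024, device=f"cuda:{index}")
        total = x.sum().item()
        name = torch.cuda.get_device_name(index)
        report.append(f"cuda:{index} ({name}): sum = {total}")
    return report


if __name__ == "__main__":
    for line in check_gpus():
        print(line)
```

If this script itself crashes with a segmentation fault, the installed PyTorch build is likely still not the right one for the machine.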