经典的卷积神经网络模型 - ResNet

经典的卷积神经网络模型 - ResNet

flyfish

2015年,何恺明(Kaiming He)等人在论文《Deep Residual Learning for Image Recognition》中提出了ResNet(Residual Network,残差网络)。在当时,随着深度神经网络层数的增加,训练变得越来越困难,主要问题是梯度消失和梯度爆炸现象。即使使用各种优化技术和正则化方法,深层网络的表现仍然不如浅层网络。ResNet通过引入残差块(Residual Block)有效解决了这个问题,使得网络层数可以大幅度增加,同时还能显著提升模型的表现。

经典的卷积神经网络模型 - AlexNet

经典的卷积神经网络模型 - VGGNet

卷积层的输出

1x1卷积的作用

2. 残差(Residual)

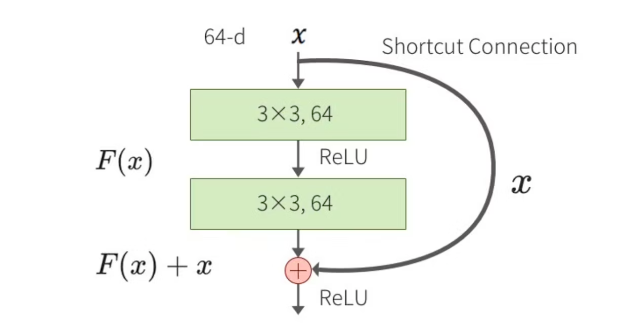

在ResNet中,残差指的是输入值与输出值之间的差值。具体来说,假设输入为 x x x,经过一系列变换后的输出为 F ( x ) F(x) F(x),ResNet引入了一条“快捷连接”(shortcut connection),直接将输入 x x x加入到输出 F ( x ) F(x) F(x),最终的输出为 H ( x ) = F ( x ) + x H(x) = F(x) + x H(x)=F(x)+x。这种结构称为残差块(Residual Block)。

3. ResNet的不同版本

ResNet有多个不同版本,后面的数字表示网络层的数量。具体来说:

- ResNet18: 18层

- ResNet34: 34层

- ResNet50: 50层

- ResNet101: 101层

- ResNet152: 152层

4. 常规残差模块

常规残差模块(Residual Block)包含两个3x3卷积层,每个卷积层后面跟着批归一化(Batch Normalization)和ReLU激活函数。假设输入为 x x x,经过第一层卷积、批归一化和ReLU后的输出为 F 1 ( x ) F_1(x) F1(x),再经过第二层卷积、批归一化后的输出为 F 2 ( F 1 ( x ) ) F_2(F_1(x)) F2(F1(x))。最终的输出是输入 x x x和 F 2 ( F 1 ( x ) ) F_2(F_1(x)) F2(F1(x))的和,即 H ( x ) = F ( x ) + x H(x) = F(x) + x H(x)=F(x)+x。

ResNet-18和ResNet-34使用的是BasicBlock。

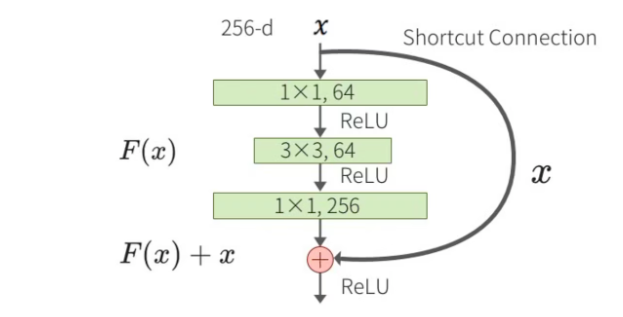

5. 瓶颈残差模块(Bottleneck Residual Block)

瓶颈残差模块用于更深的ResNet版本(如ResNet50及以上),目的是减少计算量和参数量。瓶颈残差模块包含三个卷积层:一个1x1卷积层用于降维,一个3x3卷积层用于特征提取,最后一个1x1卷积层用于升维。假设输入为 x x x,经过1x1卷积降维后的输出为 F 1 ( x ) F_1(x) F1(x),再经过3x3卷积后的输出为 F 2 ( F 1 ( x ) ) F_2(F_1(x)) F2(F1(x)),最后经过1x1卷积升维后的输出为 F 3 ( F 2 ( F 1 ( x ) ) ) F_3(F_2(F_1(x))) F3(F2(F1(x)))。最终的输出是输入 x x x和 F 3 ( F 2 ( F 1 ( x ) ) ) F_3(F_2(F_1(x))) F3(F2(F1(x)))的和,即 H ( x ) = F ( x ) + x H(x) = F(x) + x H(x)=F(x)+x。ResNet-50、ResNet-101和ResNet-152使用的是Bottleneck。

6. 快捷连接(shortcut connection )

快捷连接(shortcut connection),即直接将输入 x x x加到输出 F ( x ) F(x) F(x)上,从而避免了梯度消失和梯度爆炸问题。

import torchvision.models as models

resnet18 = models.resnet18()

print(resnet18)

ResNet((conv1): Conv2d(3, 64, kernel_size=(7, 7), stride=(2, 2), padding=(3, 3), bias=False)(bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu): ReLU(inplace=True)(maxpool): MaxPool2d(kernel_size=3, stride=2, padding=1, dilation=1, ceil_mode=False)(layer1): Sequential((0): BasicBlock((conv1): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)(bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu): ReLU(inplace=True)(conv2): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)(bn2): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True))(1): BasicBlock((conv1): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)(bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu): ReLU(inplace=True)(conv2): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)(bn2): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)))(layer2): Sequential((0): BasicBlock((conv1): Conv2d(64, 128, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)(bn1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu): ReLU(inplace=True)(conv2): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)(bn2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(downsample): Sequential((0): Conv2d(64, 128, kernel_size=(1, 1), stride=(2, 2), bias=False)(1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)))(1): BasicBlock((conv1): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)(bn1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu): ReLU(inplace=True)(conv2): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)(bn2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)))(layer3): Sequential((0): BasicBlock((conv1): Conv2d(128, 256, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)(bn1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu): ReLU(inplace=True)(conv2): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)(bn2): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(downsample): Sequential((0): Conv2d(128, 256, kernel_size=(1, 1), stride=(2, 2), bias=False)(1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)))(1): BasicBlock((conv1): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)(bn1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu): ReLU(inplace=True)(conv2): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)(bn2): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)))(layer4): Sequential((0): BasicBlock((conv1): Conv2d(256, 512, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)(bn1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu): ReLU(inplace=True)(conv2): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)(bn2): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(downsample): Sequential((0): Conv2d(256, 512, kernel_size=(1, 1), stride=(2, 2), bias=False)(1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)))(1): BasicBlock((conv1): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)(bn1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu): ReLU(inplace=True)(conv2): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)(bn2): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)))(avgpool): AdaptiveAvgPool2d(output_size=(1, 1))(fc): Linear(in_features=512, out_features=1000, bias=True)

)

自定义实现ResNet-18

import torch

import torch.nn as nn

import torch.nn.functional as Fclass BasicBlock(nn.Module):expansion = 1def __init__(self, in_channels, out_channels, stride=1):super(BasicBlock, self).__init__()self.conv1 = nn.Conv2d(in_channels, out_channels, kernel_size=3, stride=stride, padding=1, bias=False)self.bn1 = nn.BatchNorm2d(out_channels)self.relu = nn.ReLU(inplace=True)self.conv2 = nn.Conv2d(out_channels, out_channels, kernel_size=3, stride=1, padding=1, bias=False)self.bn2 = nn.BatchNorm2d(out_channels)self.shortcut = nn.Sequential()if stride != 1 or in_channels != self.expansion * out_channels:self.shortcut = nn.Sequential(nn.Conv2d(in_channels, self.expansion * out_channels, kernel_size=1, stride=stride, bias=False),nn.BatchNorm2d(self.expansion * out_channels))def forward(self, x):out = self.relu(self.bn1(self.conv1(x)))out = self.bn2(self.conv2(out))out += self.shortcut(x)out = self.relu(out)return outclass ResNet(nn.Module):def __init__(self, block, num_blocks, num_classes=1000):super(ResNet, self).__init__()self.in_channels = 64self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3, bias=False)self.bn1 = nn.BatchNorm2d(64)self.relu = nn.ReLU(inplace=True)self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)self.layer1 = self._make_layer(block, 64, num_blocks[0], stride=1)self.layer2 = self._make_layer(block, 128, num_blocks[1], stride=2)self.layer3 = self._make_layer(block, 256, num_blocks[2], stride=2)self.layer4 = self._make_layer(block, 512, num_blocks[3], stride=2)self.avgpool = nn.AdaptiveAvgPool2d((1, 1))self.fc = nn.Linear(512 * block.expansion, num_classes)def _make_layer(self, block, out_channels, num_blocks, stride):layers = []layers.append(block(self.in_channels, out_channels, stride))self.in_channels = out_channels * block.expansionfor _ in range(1, num_blocks):layers.append(block(self.in_channels, out_channels))return nn.Sequential(*layers)def forward(self, x):x = self.relu(self.bn1(self.conv1(x)))x = self.maxpool(x)x = self.layer1(x)x = self.layer2(x)x = self.layer3(x)x = self.layer4(x)x = self.avgpool(x)x = torch.flatten(x, 1)x = self.fc(x)return xdef resnet18(num_classes=1000):return ResNet(BasicBlock, [2, 2, 2, 2], num_classes)# Example usage

model = resnet18()

print(model)

自定义实现ResNet-18、ResNet-34、ResNet-50、ResNet-101和ResNet-152

ResNet-18和ResNet-34使用的是BasicBlock,而ResNet-50、ResNet-101和ResNet-152使用的是Bottleneck。

import torch

import torch.nn as nn

import torch.nn.functional as Fclass BasicBlock(nn.Module):expansion = 1def __init__(self, in_channels, out_channels, stride=1):super(BasicBlock, self).__init__()self.conv1 = nn.Conv2d(in_channels, out_channels, kernel_size=3, stride=stride, padding=1, bias=False)self.bn1 = nn.BatchNorm2d(out_channels)self.relu = nn.ReLU(inplace=True)self.conv2 = nn.Conv2d(out_channels, out_channels, kernel_size=3, stride=1, padding=1, bias=False)self.bn2 = nn.BatchNorm2d(out_channels)self.shortcut = nn.Sequential()if stride != 1 or in_channels != self.expansion * out_channels:self.shortcut = nn.Sequential(nn.Conv2d(in_channels, self.expansion * out_channels, kernel_size=1, stride=stride, bias=False),nn.BatchNorm2d(self.expansion * out_channels))def forward(self, x):out = self.relu(self.bn1(self.conv1(x)))out = self.bn2(self.conv2(out))out += self.shortcut(x)out = self.relu(out)return outclass Bottleneck(nn.Module):expansion = 4def __init__(self, in_channels, out_channels, stride=1):super(Bottleneck, self).__init__()self.conv1 = nn.Conv2d(in_channels, out_channels, kernel_size=1, bias=False)self.bn1 = nn.BatchNorm2d(out_channels)self.conv2 = nn.Conv2d(out_channels, out_channels, kernel_size=3, stride=stride, padding=1, bias=False)self.bn2 = nn.BatchNorm2d(out_channels)self.conv3 = nn.Conv2d(out_channels, out_channels * self.expansion, kernel_size=1, bias=False)self.bn3 = nn.BatchNorm2d(out_channels * self.expansion)self.relu = nn.ReLU(inplace=True)self.shortcut = nn.Sequential()if stride != 1 or in_channels != out_channels * self.expansion:self.shortcut = nn.Sequential(nn.Conv2d(in_channels, out_channels * self.expansion, kernel_size=1, stride=stride, bias=False),nn.BatchNorm2d(out_channels * self.expansion))def forward(self, x):out = self.relu(self.bn1(self.conv1(x)))out = self.relu(self.bn2(self.conv2(out)))out = self.bn3(self.conv3(out))out += self.shortcut(x)out = self.relu(out)return outclass ResNet(nn.Module):def __init__(self, block, num_blocks, num_classes=1000):super(ResNet, self).__init__()self.in_channels = 64self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3, bias=False)self.bn1 = nn.BatchNorm2d(64)self.relu = nn.ReLU(inplace=True)self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)self.layer1 = self._make_layer(block, 64, num_blocks[0], stride=1)self.layer2 = self._make_layer(block, 128, num_blocks[1], stride=2)self.layer3 = self._make_layer(block, 256, num_blocks[2], stride=2)self.layer4 = self._make_layer(block, 512, num_blocks[3], stride=2)self.avgpool = nn.AdaptiveAvgPool2d((1, 1))self.fc = nn.Linear(512 * block.expansion, num_classes)def _make_layer(self, block, out_channels, num_blocks, stride):layers = []layers.append(block(self.in_channels, out_channels, stride))self.in_channels = out_channels * block.expansionfor _ in range(1, num_blocks):layers.append(block(self.in_channels, out_channels))return nn.Sequential(*layers)def forward(self, x):x = self.relu(self.bn1(self.conv1(x)))x = self.maxpool(x)x = self.layer1(x)x = self.layer2(x)x = self.layer3(x)x = self.layer4(x)x = self.avgpool(x)x = torch.flatten(x, 1)x = self.fc(x)return xdef resnet18(num_classes=1000):return ResNet(BasicBlock, [2, 2, 2, 2], num_classes)def resnet34(num_classes=1000):return ResNet(BasicBlock, [3, 4, 6, 3], num_classes)def resnet50(num_classes=1000):return ResNet(Bottleneck, [3, 4, 6, 3], num_classes)def resnet101(num_classes=1000):return ResNet(Bottleneck, [3, 4, 23, 3], num_classes)def resnet152(num_classes=1000):return ResNet(Bottleneck, [3, 8, 36, 3], num_classes)# Example usage

model_18 = resnet18()

model_34 = resnet34()

model_50 = resnet50()

model_101 = resnet101()

model_152 = resnet152()print(model_18)

print(model_34)

print(model_50)

print(model_101)

print(model_152)

网络结构

以ResNet18和ResNet50的结构举例

因为ResNet-18和ResNet-34使用的是BasicBlock,ResNet-50、ResNet-101和ResNet-152使用的是Bottleneck,可以区分看。

ResNet18

-

输入:224x224图像

-

卷积层:7x7卷积,64个过滤器,步长2

-

最大池化层:3x3,步长2

-

残差模块:

-

2个Basic Block,每个包含2个3x3卷积层(64个过滤器)

-

2个Basic Block,每个包含2个3x3卷积层(128个过滤器)

-

2个Basic Block,每个包含2个3x3卷积层(256个过滤器)

-

2个Basic Block,每个包含2个3x3卷积层(512个过滤器)

-

-

全局平均池化层

-

全连接层:1000个单元(对应ImageNet的1000个类别)

用参数表示就是 [2, 2, 2, 2]

ResNet50

-

输入:224x224图像

-

卷积层:7x7卷积,64个过滤器,步长2

-

最大池化层:3x3,步长2

-

残差模块:

-

3个Bottleneck Block,每个包含1x1降维、3x3卷积、1x1升维(256个过滤器)

-

4个Bottleneck Block,每个包含1x1降维、3x3卷积、1x1升维(512个过滤器)

-

6个Bottleneck Block,每个包含1x1降维、3x3卷积、1x1升维(1024个过滤器)

-

3个Bottleneck Block,每个包含1x1降维、3x3卷积、1x1升维(2048个过滤器)

-

-

全局平均池化层

-

全连接层:1000个单元(对应ImageNet的1000个类别)

用参数表示就是 [3, 4, 6, 3]

列表参数表示每个阶段(layer)中包含的残差块(residual block)的数量。ResNet的网络结构通常分为多个阶段,每个阶段包含多个残差块。这些残差块可以是常规的(BasicBlock)或瓶颈的(Bottleneck)。具体来说:

[2, 2, 2, 2] 表示第1个阶段有2个残差块,第2个阶段有2个残差块,第3个阶段有2个残差块,第4个阶段有2个残差块。

[3, 4, 6, 3] 表示第1个阶段有3个残差块,第2个阶段有4个残差块,第3个阶段有6个残差块,第4个阶段有3个残差块。

BasicBlock: 实现了常规残差模块,包含两个3x3的卷积层。用于ResNet-18和ResNet-34。

Bottleneck: 实现了瓶颈残差模块,包含一个1x1卷积层、一个3x3卷积层和另一个1x1卷积层。用于ResNet-50、ResNet-101和ResNet-152。

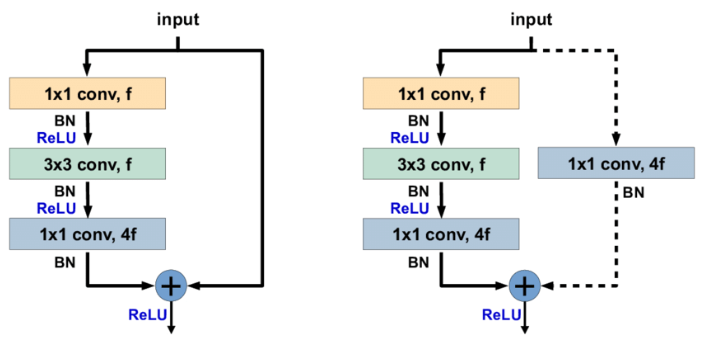

identity shortcut和projection shortcut

import torchvision.models as models

model = models.resnet50()

print(model)

完整内容自行打印看,这里主要说明 identity shortcut和projection shortcut

ResNet((conv1): Conv2d(3, 64, kernel_size=(7, 7), stride=(2, 2), padding=(3, 3), bias=False)(bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu): ReLU(inplace=True)(maxpool): MaxPool2d(kernel_size=3, stride=2, padding=1, dilation=1, ceil_mode=False)(layer1): Sequential((0): Bottleneck((conv1): Conv2d(64, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)(bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(conv2): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)(bn2): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(conv3): Conv2d(64, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)(bn3): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu): ReLU(inplace=True)(downsample): Sequential((0): Conv2d(64, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)(1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)))(1): Bottleneck((conv1): Conv2d(256, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)(bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(conv2): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)(bn2): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(conv3): Conv2d(64, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)(bn3): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu): ReLU(inplace=True))(2): Bottleneck((conv1): Conv2d(256, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)(bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(conv2): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)(bn2): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(conv3): Conv2d(64, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)(bn3): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu): ReLU(inplace=True)))......

在 ResNet 中,identity shortcut 和 projection shortcut 主要出现在 Bottleneck 模块中。

- Identity Shortcut : 这是直接跳过层的快捷方式,输入直接添加到输出。通常在输入和输出维度相同时使用。在模型输出中可以看到,如

layer1的第1和第2个Bottleneck:

(1): Bottleneck((conv1): Conv2d(256, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)(bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(conv2): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)(bn2): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(conv3): Conv2d(64, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)(bn3): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu): ReLU(inplace=True)

)

可以看到这里没有 downsample 层,所以输入和输出直接相加。

- Projection Shortcut : 这是使用卷积层调整维度的快捷方式,用于当输入和输出维度不同时。在模型输出中可以看到,如

layer1的第0个Bottleneck:

(0): Bottleneck((conv1): Conv2d(64, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)(bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(conv2): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)(bn2): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(conv3): Conv2d(64, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)(bn3): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)(relu): ReLU(inplace=True)(downsample): Sequential((0): Conv2d(64, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)(1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True))

)

这里有一个 downsample 层,通过卷积和批量归一化调整输入的维度以匹配输出。

-

Identity Shortcut : 左侧图,没有

downsample层。如果要写上downsample也是(downsample): Sequential()括号里是空的 -

Projection Shortcut :右侧图 有

downsample层,用于调整维度。 比如

(downsample): Sequential((0): Conv2d(64, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)(1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True))

Bottleneck 结构中,f 通常表示瓶颈层的过滤器(或通道)数。

在 Bottleneck 模块中,通常有三层卷积:

第一个 1x1 卷积,用于降低维度,通道数是 f。

第二个 3x3 卷积,用于在降低维度的情况下进行卷积操作,通道数也是 f。

第三个 1x1 卷积,用于恢复维度,通道数是 4f。

如果要保证输出的特征图大小是固定的(如 1x1),自适应平均池化或者全局平均池化是最常用的选择;如果要调整通道数并保持空间结构,则可以用 1x1 卷积和池化的组合。

无论输入的特征图大小是多少,自适应平均池化都可以将其调整到一个指定的输出大小。在 ResNet 中使用的 AdaptiveAvgPool2d(output_size=(1, 1)) 会将输入的特征图调整到大小为 1x1。通过将特征图大小固定,可以更容易地设计网络结构,尤其是全连接层的输入部分。例如,将特征图调整到 1x1 后,后面的全连接层只需要处理固定数量的特征,不用考虑输入图像的大小变化。在特征图被调整到较小的大小(例如 1x1)后,随后的全连接层所需的参数和计算量会显著减少。