PyTorch Study Notes (16): Training on a GPU

I. Method 1

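Three kinds of objects need to be moved to the GPU: the network model, the loss function, and the data (both inputs and labels).

Locate these three kinds of objects, call .cuda() on each, and assign the returned value back.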

if torch.cuda.is_available():
    mynn = mynn.cuda()

if torch.cuda.is_available():
    loss_function = loss_function.cuda()

for data in train_dataloader:
    imgs, targets = data
    if torch.cuda.is_available():
        imgs = imgs.cuda()
        targets = targets.cuda()

for data in test_dataloader:
    imgs, targets = data
    if torch.cuda.is_available():
        imgs = imgs.cuda()
        targets = targets.cuda()

Full code:

import time

import torch
import torchvision
from torch import nn
from torch.utils.data import DataLoader
from torch.utils.tensorboard import SummaryWriter

# from model import *

# Prepare the datasets
train_data = torchvision.datasets.CIFAR10(root="../datasets", train=True, transform=torchvision.transforms.ToTensor(), download=False)
test_data = torchvision.datasets.CIFAR10(root="../datasets", train=False, transform=torchvision.transforms.ToTensor(), download=False)

train_data_size = len(train_data)
test_data_size = len(test_data)
print("Training set size: {}".format(train_data_size))
print("Test set size: {}".format(test_data_size))

# Load the datasets with DataLoader
train_dataloader = DataLoader(train_data, batch_size=64)
test_dataloader = DataLoader(test_data, batch_size=64)

# Build the network model
class MyNN(nn.Module):
    def __init__(self):
        super(MyNN, self).__init__()
        self.model = nn.Sequential(
            nn.Conv2d(3, 32, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Conv2d(32, 32, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Conv2d(32, 64, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Flatten(),
            nn.Linear(64 * 4 * 4, 64),
            nn.Linear(64, 10),
        )

    def forward(self, x):
        x = self.model(x)
        return x

mynn = MyNN()
if torch.cuda.is_available():
    mynn = mynn.cuda()

# Loss function
loss_function = nn.CrossEntropyLoss()
if torch.cuda.is_available():
    loss_function = loss_function.cuda()

# Optimizer
learning_rate = 1e-2
optimizer = torch.optim.SGD(mynn.parameters(), lr=learning_rate)

# Training bookkeeping
total_train_step = 0  # number of training steps so far
total_test_step = 0  # number of evaluation passes so far
epoch = 10  # number of training epochs

# Add TensorBoard logging
writer = SummaryWriter("../logs_train")
start_time = time.time()

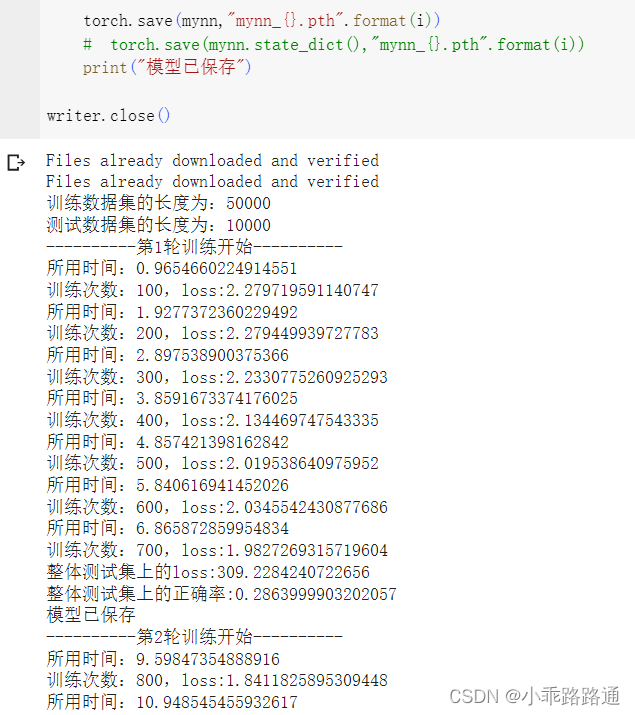

for i in range(epoch):
    print("---------- Epoch {} training starts ----------".format(i + 1))

    # Training phase
    mynn.train()
    for data in train_dataloader:
        imgs, targets = data
        if torch.cuda.is_available():
            imgs = imgs.cuda()
            targets = targets.cuda()
        outputs = mynn(imgs)
        loss = loss_function(outputs, targets)

        # Let the optimizer update the model
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

        total_train_step += 1
        if total_train_step % 100 == 0:
            end_time = time.time()
            print("Elapsed time: {}".format(end_time - start_time))
            print("Training step: {}, loss: {}".format(total_train_step, loss.item()))
            writer.add_scalar("train_loss", loss.item(), total_train_step)

    # Evaluation phase
    mynn.eval()
    total_test_loss = 0
    total_accuracy = 0
    with torch.no_grad():
        for data in test_dataloader:
            imgs, targets = data
            if torch.cuda.is_available():
                imgs = imgs.cuda()
                targets = targets.cuda()
            outputs = mynn(imgs)
            loss = loss_function(outputs, targets)
            # .item() converts the 0-dim tensors to plain Python numbers
            total_test_loss += loss.item()
            accuracy = (outputs.argmax(1) == targets).sum()
            total_accuracy += accuracy.item()

    print("Loss on the whole test set: {}".format(total_test_loss))
    print("Accuracy on the whole test set: {}".format(total_accuracy / test_data_size))
    writer.add_scalar("test_loss", total_test_loss, total_test_step)
    writer.add_scalar("test_accuracy", total_accuracy / test_data_size, total_test_step)
    total_test_step += 1

    torch.save(mynn, "mynn_{}.pth".format(i))
    # torch.save(mynn.state_dict(), "mynn_{}.pth".format(i))
    print("Model saved")

writer.close()

Comparing CPU and GPU training time:
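One caveat when timing GPU code: CUDA operations are launched asynchronously, so a wall-clock reading taken right after a call may not include the time the GPU actually spent on it. The sketch below shows one way to time a small workload on either device. The helper name `timed_matmul`, the matrix size, and the repeat count are illustrative assumptions, not part of the notes above.

```python
import time

import torch


def timed_matmul(device, size=256, repeats=5):
    """Time `repeats` matrix multiplications on `device` (hypothetical helper)."""
    x = torch.randn(size, size, device=device)
    if device.type == "cuda":
        torch.cuda.synchronize()  # wait for pending GPU work before starting the clock
    start = time.perf_counter()
    for _ in range(repeats):
        _ = x @ x
    if device.type == "cuda":
        torch.cuda.synchronize()  # wait for the GPU to finish before stopping the clock
    return time.perf_counter() - start


cpu_time = timed_matmul(torch.device("cpu"))
print("CPU: {:.6f} s".format(cpu_time))
if torch.cuda.is_available():
    gpu_time = timed_matmul(torch.device("cuda"))
    print("GPU: {:.6f} s".format(gpu_time))
```

In the training loop, `loss.item()` copies a value back to the CPU and therefore already waits for the GPU, so the elapsed time printed every 100 steps should be a reasonable approximation.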

Checking GPU information:

Run nvidia-smi in a terminal.
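The same check can be done from a Python script. This is a minimal sketch using only the standard library; the function name `gpu_summary` is a hypothetical helper, and it simply returns `None` when `nvidia-smi` is not on the PATH or exits with an error.

```python
import shutil
import subprocess


def gpu_summary():
    """Return the text output of nvidia-smi, or None if it is unavailable."""
    exe = shutil.which("nvidia-smi")
    if exe is None:
        return None  # no NVIDIA driver tools installed on this machine
    result = subprocess.run([exe], capture_output=True, text=True, check=False)
    return result.stdout if result.returncode == 0 else None


summary = gpu_summary()
print(summary if summary is not None else "nvidia-smi not found or failed")
```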

Using Google Colab: Google provides a free GPU.

Edit -> Notebook settings -> set Hardware accelerator to GPU (30 h of free use per week)

II. Method 2 (more common)

Define the training device

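device = torch.device("cpu")

# On a single-GPU machine, the following two forms are equivalent
device = torch.device("cuda")
device = torch.device("cuda:0")

# A concise form that lets the program run with or without a GPU/CUDA
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

As before, three kinds of objects are involved: the network model, the loss function, and the data (both inputs and labels).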

Locate these three kinds of objects, call .to(device) on each, and assign the returned value back.
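Whether the assignment is needed depends on the type: `nn.Module.to` modifies the module in place and returns the same object, while `Tensor.to` leaves the original tensor untouched and returns a converted one. The sketch below illustrates this with a dtype conversion instead of a device move, purely so that it also runs on a machine without a GPU; that substitution is an assumption of this example, and the same rule applies to `.to(device)`.

```python
import torch
from torch import nn

# Module: .to() converts the parameters in place and returns the module itself
layer = nn.Linear(2, 2)
returned = layer.to(torch.float64)
module_same_object = returned is layer
module_dtype = layer.weight.dtype  # already float64 without reassignment

# Tensor: .to() returns a new tensor; the original is unchanged
t = torch.zeros(2)
t.to(torch.float64)  # result discarded, t is still float32
tensor_dtype_unassigned = t.dtype
t = t.to(torch.float64)  # the assignment is what makes the change stick
tensor_dtype_assigned = t.dtype

print(module_same_object, module_dtype)
print(tensor_dtype_unassigned, tensor_dtype_assigned)
```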

mynn = MyNN()
mynn = mynn.to(device)
# Reassigning to mynn is optional here; calling mynn.to(device) alone also works

loss_function = nn.CrossEntropyLoss()
loss_function = loss_function.to(device)
# Reassigning to loss_function is optional here; calling loss_function.to(device) alone also works

for data in train_dataloader:
    imgs, targets = data
    imgs = imgs.to(device)
    targets = targets.to(device)
    # The assignment is required here

for data in test_dataloader:
    imgs, targets = data
    imgs = imgs.to(device)
    targets = targets.to(device)
    # The assignment is required here

Full code:

import time

import torch
import torchvision
from torch import nn
from torch.utils.data import DataLoader
from torch.utils.tensorboard import SummaryWriter

# from model import *

# Define the training device
# device = torch.device("cpu")
# device = torch.device("cuda")
# device = torch.device("cuda:0")
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Prepare the datasets
train_data = torchvision.datasets.CIFAR10(root="../datasets", train=True, transform=torchvision.transforms.ToTensor(), download=False)
test_data = torchvision.datasets.CIFAR10(root="../datasets", train=False, transform=torchvision.transforms.ToTensor(), download=False)

train_data_size = len(train_data)
test_data_size = len(test_data)
print("Training set size: {}".format(train_data_size))
print("Test set size: {}".format(test_data_size))

# Load the datasets with DataLoader
train_dataloader = DataLoader(train_data, batch_size=64)
test_dataloader = DataLoader(test_data, batch_size=64)

# Build the network model
class MyNN(nn.Module):
    def __init__(self):
        super(MyNN, self).__init__()
        self.model = nn.Sequential(
            nn.Conv2d(3, 32, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Conv2d(32, 32, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Conv2d(32, 64, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Flatten(),
            nn.Linear(64 * 4 * 4, 64),
            nn.Linear(64, 10),
        )

    def forward(self, x):
        x = self.model(x)
        return x

mynn = MyNN()
mynn.to(device)

# Loss function
loss_function = nn.CrossEntropyLoss()
loss_function.to(device)

# Optimizer
learning_rate = 1e-2
optimizer = torch.optim.SGD(mynn.parameters(), lr=learning_rate)

# Training bookkeeping
total_train_step = 0  # number of training steps so far
total_test_step = 0  # number of evaluation passes so far
epoch = 10  # number of training epochs

# Add TensorBoard logging
writer = SummaryWriter("../logs_train")
start_time = time.time()

for i in range(epoch):
    print("---------- Epoch {} training starts ----------".format(i + 1))

    # Training phase
    mynn.train()
    for data in train_dataloader:
        imgs, targets = data
        imgs = imgs.to(device)
        targets = targets.to(device)
        outputs = mynn(imgs)
        loss = loss_function(outputs, targets)

        # Let the optimizer update the model
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

        total_train_step += 1
        if total_train_step % 100 == 0:
            end_time = time.time()
            print("Elapsed time: {}".format(end_time - start_time))
            print("Training step: {}, loss: {}".format(total_train_step, loss.item()))
            writer.add_scalar("train_loss", loss.item(), total_train_step)

    # Evaluation phase
    mynn.eval()
    total_test_loss = 0
    total_accuracy = 0
    with torch.no_grad():
        for data in test_dataloader:
            imgs, targets = data
            imgs = imgs.to(device)
            targets = targets.to(device)
            outputs = mynn(imgs)
            loss = loss_function(outputs, targets)
            # .item() converts the 0-dim tensors to plain Python numbers
            total_test_loss += loss.item()
            accuracy = (outputs.argmax(1) == targets).sum()
            total_accuracy += accuracy.item()

    print("Loss on the whole test set: {}".format(total_test_loss))
    print("Accuracy on the whole test set: {}".format(total_accuracy / test_data_size))
    writer.add_scalar("test_loss", total_test_loss, total_test_step)
    writer.add_scalar("test_accuracy", total_accuracy / test_data_size, total_test_step)
    total_test_step += 1

    torch.save(mynn, "mynn_{}.pth".format(i))
    # torch.save(mynn.state_dict(), "mynn_{}.pth".format(i))
    print("Model saved")

writer.close()
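A related point for the save step: weights saved from a GPU model record their device, so loading them on a CPU-only machine usually needs `map_location`. The sketch below shows a round trip with the `state_dict` variant (the commented-out `torch.save` line in the loop). It uses a small `nn.Linear` stand-in and an in-memory buffer instead of `MyNN` and a `.pth` file; both are assumptions made to keep the example short and self-contained.

```python
import io

import torch
from torch import nn

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
model = nn.Linear(4, 2).to(device)  # stand-in for the trained network

# Save only the parameters (an in-memory buffer stands in for a file path)
buffer = io.BytesIO()
torch.save(model.state_dict(), buffer)
buffer.seek(0)

# Load on the CPU regardless of where the weights were saved
state = torch.load(buffer, map_location=torch.device("cpu"))
restored = nn.Linear(4, 2)  # the architecture must be rebuilt before loading
restored.load_state_dict(state)

weights_match = torch.equal(restored.weight, model.weight.cpu())
print("Weights restored correctly:", weights_match)
```

Saving the `state_dict` rather than the whole model means the loading script has to construct the network itself, but it avoids depending on the exact class definition being importable under the same name.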