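Elastic Stack Techniques: An Introduction to Logstash

I. What Is Logstash

Logstash is an open-source data processing pipeline in the Elastic Stack (ELK Stack), used mainly to collect, parse, filter, and ship data. It supports many input sources, such as files, network streams, and databases. It processes data flexibly, for example by transforming or aggregating it with filter plugins, and sends the result to a range of outputs, such as Elasticsearch, files, and databases.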
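To make the collect, filter, ship flow concrete, here is a minimal Python sketch of a three-stage pipeline. It is only an illustration of the idea, not how Logstash is implemented; the names `run_pipeline`, `drop_empty`, and `add_length` are made up for this example.

```python
from typing import Callable, Iterable, Optional

Event = dict  # one event is a dict of field name -> value
Filter = Callable[[Event], Optional[Event]]


def run_pipeline(source: Iterable[str], filters: list[Filter],
                 sink: Callable[[Event], None]) -> None:
    """Push every line from `source` through `filters` and into `sink`."""
    for line in source:
        # Input stage: wrap the raw line in an event.
        event: Optional[Event] = {"message": line.rstrip("\n")}
        # Filter stage: each filter may modify the event or drop it (None).
        for apply_filter in filters:
            event = apply_filter(event)
            if event is None:
                break
        # Output stage: hand surviving events to the sink.
        if event is not None:
            sink(event)


def drop_empty(event: Event) -> Optional[Event]:
    """Hypothetical filter: discard events with an empty message."""
    return event if event["message"] else None


def add_length(event: Event) -> Event:
    """Hypothetical filter: add a derived field."""
    event["length"] = len(event["message"])
    return event


collected: list[Event] = []
run_pipeline(["hello\n", "\n", "logstash\n"], [drop_empty, add_length], collected.append)
print(collected)
```

The filter list can be empty, which mirrors the fact that the filter stage is the optional part of a pipeline.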

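II. Use Cases for Logstash

- Log collection and analysis: Logstash can collect log data from many sources, such as files, HTTP requests, Syslog, and databases. The collected logs can be stored in Elasticsearch for search and analysis, which helps operations staff locate and resolve problems quickly.

- Data transformation and filtering: Logstash can transform, filter, and aggregate the data it collects. For example, it can convert JSON data to XML, drop fields that are not needed, or extract structured information from unstructured data, such as using Grok to pull timestamps and IP addresses out of log lines.

- Data integration: Logstash can collect data from different sources and in different formats and consolidate it into a single source for analysis and mining. For example, logs from several application systems can be brought together for centralized management and analysis.

- Real-time monitoring and alerting: By collecting and analyzing data in real time, Logstash makes it possible to detect anomalies and failures early and to trigger alerts and notifications, so that operations staff can respond quickly.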

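An earlier post mentioned that Logstash is heavier than Filebeat. Put the other way round, that extra weight means Logstash offers more functionality. Personally, though, I find Filebeat sufficient for everyday use.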

III. Deploying Logstash

1. Download Logstash

    wget https://artifacts.elastic.co/downloads/logstash/logstash-7.17.28-amd64.deb

2. Install Logstash

    [root@elk93 ~]# ll -h logstash-7.17.28-amd64.deb
    -rw-r--r-- 1 root root 359M Mar 13 14:41 logstash-7.17.28-amd64.deb
    [root@elk93 ~]# dpkg -i logstash-7.17.28-amd64.deb

3. Create a symbolic link so that the logstash command is available on PATH

    [root@elk93 ~]# ln -svf /usr/share/logstash/bin/logstash /usr/local/bin/
    '/usr/local/bin/logstash' -> '/usr/share/logstash/bin/logstash'

The ln options used here:

-s creates a symbolic link

-v prints what is being done

-f overwrites the target if it already exists

    [root@elk93 ~]# logstash --help

IV. Using Logstash

1. 基于命令行方式启动实例

基于命令行的方式启动实例,使用-e选项指定配置信息(不推荐)

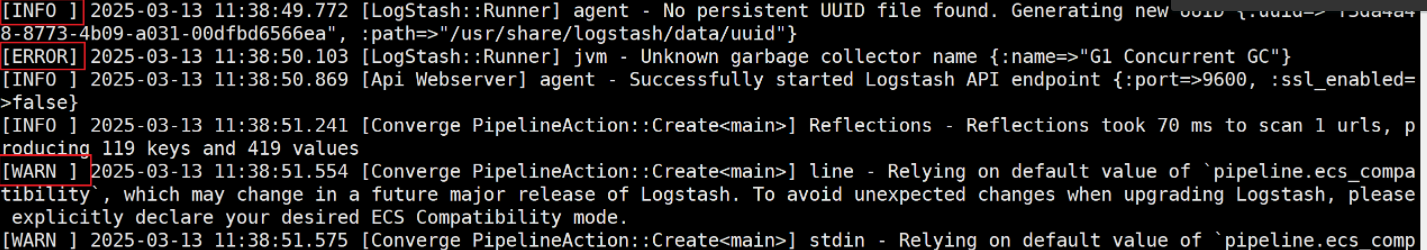

[root@elk93 ~]# logstash -e "input { stdin { type => stdin } } output { stdout { codec => rubydebug } }"

11111111111111111111111111111111111111111111111

{"type" => "stdin","@timestamp" => 2025-03-13T11:39:04.474Z,"message" => "11111111111111111111111111111111111111111111111","@version" => "1","host" => "elk93"

}启动实例会有很多不同程度的报错信息,我们可以指定查看日志程度

我们指定level为warn,不输出info字段了

[root@elk93 ~]# logstash -e "input { stdin { type => stdin } } output { stdout { codec => rubydebug } }" --log.level warn

加参数 --log.level warn

这样在查看就没有info字段信息了

22222222222222222222222222222222222222222

{"@version" => "1","type" => "stdin","host" => "elk93","message" => "22222222222222222222222222222222222222222","@timestamp" => 2025-03-13T11:41:58.326Z

}
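The rubydebug output above shows that each line typed on stdin becomes an event with a handful of standard fields. A rough Python sketch of that wrapping step follows; `make_event` is a hypothetical helper for illustration and only mimics the field names seen in the output.

```python
import socket
from datetime import datetime, timezone


def make_event(line: str, event_type: str = "stdin") -> dict:
    """Wrap one input line in the fields seen in the rubydebug output."""
    now = datetime.now(timezone.utc)
    return {
        "type": event_type,  # corresponds to `type => stdin` in the config
        # UTC timestamp with millisecond precision and a trailing Z
        "@timestamp": now.isoformat(timespec="milliseconds").replace("+00:00", "Z"),
        "message": line.rstrip("\n"),  # the raw line without its newline
        "@version": "1",
        "host": socket.gethostname(),
    }


event = make_event("11111111\n")
print(event)
```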

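2. Starting an instance from a configuration file

    [root@elk93 ~]# cat /etc/logstash/conf.d/01-stdin-to-stout.conf
    input {
      file {
        path => "/tmp/student.txt"
      }
    }

    output {
      stdout {
        codec => rubydebug
      }
    }
    [root@elk93 ~]# logstash -f /etc/logstash/conf.d/01-stdin-to-stout.conf

Append a line to the file:

    [root@elk93 ~]# echo 11111111111111111111 >>/tmp/student.txt

Logstash then prints the event:

    {
        "host" => "elk93",
        "@version" => "1",
        "path" => "/tmp/student.txt",
        "message" => "11111111111111111111",
        "@timestamp" => 2025-03-13T11:59:53.464Z
    }

Tips:

Like Filebeat, Logstash reads data line by line. By default, a line that is not yet terminated by a newline is not collected. Logstash also records the position it has read up to.

Logstash and Filebeat are operated in much the same way and work on much the same principle; Filebeat is simply the more lightweight of the two.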
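The two behaviours in the tips (only complete lines are collected, and the read position is remembered) can be sketched in a few lines of Python. This is a simplified illustration under my own assumptions, not the file input's actual code; `read_new_lines` is a made-up helper.

```python
import os
import tempfile


def read_new_lines(path: str, offset: int) -> tuple[list[str], int]:
    """Return complete lines appended after `offset`, plus the new offset."""
    lines = []
    with open(path, "rb") as f:
        f.seek(offset)
        while True:
            raw = f.readline()
            # Stop at EOF or at a partial line that has no newline yet.
            if not raw.endswith(b"\n"):
                break
            lines.append(raw[:-1].decode("utf-8"))
            offset += len(raw)  # advance only past fully consumed lines
    return lines, offset


with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "student.txt")
    with open(path, "wb") as f:
        f.write(b"first\nsecond")      # "second" has no newline yet
    lines_1, pos = read_new_lines(path, 0)
    with open(path, "ab") as f:
        f.write(b" line\n")            # now the second line is complete
    lines_2, pos = read_new_lines(path, pos)

print(lines_1, lines_2, pos)
```

The first call returns only `first`; the unterminated `second` is picked up on the next call, once its newline has arrived.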

3. How Logstash collects text logs

    [root@elk93 ~]# cat /usr/share/logstash/data/plugins/inputs/file/.sincedb_782d533684abe27068ac85b78871b9fd
    1310786 0 64768 30 1741867261.602881 /tmp/student.txt
    [root@elk93 ~]# cat /etc/logstash/conf.d/01-stdin-to-stout.conf
    input {
      file {
        path => "/tmp/student.txt"
        # Where to start reading a file the first time it is seen.
        # Valid values: beginning, end. Default: "end".
        start_position => "beginning"
      }
    }

    output {
      stdout {
        codec => rubydebug
      }
    }
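The .sincedb file printed above is where the file input keeps its read position. As far as I understand the file input documentation, each record holds the inode, the major and minor device numbers, the byte offset read so far, a last-activity timestamp, and the last known path. The Python sketch below parses the record under that assumption; `SincedbRecord` and `parse_sincedb_line` are names invented for this example.

```python
from typing import NamedTuple


class SincedbRecord(NamedTuple):
    inode: int
    major_device: int
    minor_device: int
    offset: int          # bytes of the file already read
    last_active: float   # Unix timestamp of the last activity
    path: str


def parse_sincedb_line(line: str) -> SincedbRecord:
    """Parse one sincedb record (field meanings are an assumption, see above)."""
    # The path comes last and may contain spaces, so split at most 5 times.
    inode, major, minor, offset, last_active, path = line.rstrip("\n").split(" ", 5)
    return SincedbRecord(int(inode), int(major), int(minor),
                         int(offset), float(last_active), path)


record = parse_sincedb_line("1310786 0 64768 30 1741867261.602881 /tmp/student.txt")
print(record.offset, record.path)
```

Read this way, the record says that 30 bytes of /tmp/student.txt have already been consumed, which is why deleting the sincedb file makes Logstash treat the file as new.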

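start_position applies only the first time a file is seen, that is, when no sincedb record exists for it. Such a file is read from the beginning:

    {
        "host" => "elk93",
        "@version" => "1",
        "path" => "/tmp/student.txt",
        "message" => "hehe",
        "@timestamp" => 2025-03-13T12:07:33.116Z
    }

You can also drop fields you do not want to see. This is where the filter section comes in: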

    [root@elk93 ~]# cat /etc/logstash/conf.d/01-stdin-to-stout.conf
    input {
      file {
        path => "/tmp/student.txt"
        # Where to start reading a file the first time it is seen.
        # Valid values: beginning, end. Default: "end".
        start_position => "beginning"
      }
    }

    filter {
      mutate {
        remove_field => [ "@version","host" ]
      }
    }

    output {
      stdout {
        codec => rubydebug
      }
    }

Restart Logstash:

    [root@elk93 ~]# rm -f /usr/share/logstash/data/plugins/inputs/file/.sincedb*
    [root@elk93 ~]# logstash -f /etc/logstash/conf.d/01-stdin-to-stout.conf
    {
        "path" => "/tmp/student.txt",
        "message" => "11111111111111111111",
        "@timestamp" => 2025-03-13T12:10:26.854Z
    }

The @version and host fields are now gone.

4. Starting with configuration hot reloading

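With -r (short for --config.reload.automatic), changes to the conf file take effect without restarting Logstash:

    [root@elk93 ~]# logstash -rf /etc/logstash/conf.d/02-file-to-stdout.conf

5. Running multiple Logstash instances

This works the same way as with Filebeat, which was developed as a lightweight counterpart to Logstash. Each additional instance needs its own data directory, set with --path.data.

Start instance 1:

    [root@elk93 ~]# logstash -f /etc/logstash/conf.d/01-stdin-to-stdout.conf

Start instance 2:

    [root@elk93 ~]# logstash -rf /etc/logstash/conf.d/02-file-to-stdout.conf --path.data /tmp/logstash-multiple

V. Advanced Logstash: Filters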

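- A server node can run multiple Logstash instances.

- A Logstash instance can run multiple pipelines. If no pipeline id is defined, the pipeline is the default one, named main.

- Each pipeline is made up of three components, of which filter is optional:

  - input: where the data comes from.

  - filter: which plugins process the data. This component is optional.

  - output: where the data goes.

Official references:

https://www.elastic.co/guide/en/logstash/7.17/

https://www.elastic.co/guide/en/logstash/7.17/plugins-filters-useragent.html#plugins-filters-useragent-target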

1. Collecting nginx logs with Logstash: grok

Install nginx:

    [root@elk93 ~]# apt -y install nginx

Test access:

http://10.0.0.93/

    [root@elk93 ~]# vim /etc/logstash/conf.d/02-nginx-grok-to-stout.conf
    input {
      file {
        path => "/var/log/nginx/access.log"
        start_position => "beginning"
      }
    }

    filter {
      grok {
        match => { "message" => "%{HTTPD_COMMONLOG}" }
      }
      mutate {
        remove_field => [ "@version","host","path" ]
      }
    }

    output {
      stdout {
        codec => rubydebug
      }
    }
    [root@elk93 ~]# logstash -rf /etc/logstash/conf.d/02-nginx-grok-to-stout.conf
    {
        "request" => "/",
        "clientip" => "10.0.0.1",
        "timestamp" => "13/Mar/2025:12:18:35 +0000",
        "ident" => "-",
        "verb" => "GET",
        "auth" => "-",
        "response" => "304",
        "bytes" => "0",
        "@timestamp" => 2025-03-13T12:18:40.497Z,
        "message" => "10.0.0.1 - - [13/Mar/2025:12:18:35 +0000] \"GET / HTTP/1.1\" 304 0 \"-\" \"Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/134.0.0.0 Safari/537.36\"",
        "httpversion" => "1.1"
    }

2. Collecting nginx logs with Logstash: useragent

    [root@elk93 ~]# cat /etc/logstash/conf.d/03-nginx-useragent-to-stout.conf
    input {
      file {
        path => "/var/log/nginx/access.log"
        start_position => "beginning"
      }
    }

    filter {
      # Extract arbitrary text with regular expressions and store it in named fields.
      grok {
        match => { "message" => "%{HTTPD_COMMONLOG}" }
      }
      useragent {
        source => 'message'
        # Field in which to store the parsed result; if unset, the result goes into top-level fields.
        target => "linux96_user_agent"
      }
      mutate {
        remove_field => [ "@version","host","path" ]
      }
    }

    output {
      stdout {
        codec => rubydebug
      }
    }
    [root@elk93 ~]# logstash -rf /etc/logstash/conf.d/03-nginx-useragent-to-stout.conf
    {
        "verb" => "GET",
        "@timestamp" => 2025-03-13T12:27:22.325Z,
        "ident" => "-",
        "httpversion" => "1.1",
        "timestamp" => "13/Mar/2025:12:27:22 +0000",
        "clientip" => "10.0.0.1",
        "linux96_user_agent" => {
            "device" => "Other",
            "os_full" => "Windows 10",
            "name" => "Chrome",
            "os_name" => "Windows",
            "os" => "Windows",
            "minor" => "0",
            "os_major" => "10",
            "version" => "134.0.0.0",
            "os_version" => "10",
            "major" => "134",
            "patch" => "0"
        },
        "auth" => "-",
        "message" => "10.0.0.1 - - [13/Mar/2025:12:27:22 +0000] \"GET / HTTP/1.1\" 304 0 \"-\" \"Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/134.0.0.0 Safari/537.36\"",
        "bytes" => "0",
        "request" => "/",
        "response" => "304"
    }
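To show roughly what grok and useragent pull out of the access-log line above, here is a Python sketch using hand-written regular expressions. It is a simplification under my own assumptions: the real HTTPD_COMMONLOG pattern and the useragent plugin's parser are more thorough, and `COMMON_LOG` and `CHROME_UA` are names made up for this example.

```python
import re

# Hand-written approximation of the fields HTTPD_COMMONLOG extracts.
COMMON_LOG = re.compile(
    r'(?P<clientip>\S+) (?P<ident>\S+) (?P<auth>\S+) '
    r'\[(?P<timestamp>[^\]]+)\] '
    r'"(?P<verb>\S+) (?P<request>\S+) HTTP/(?P<httpversion>[\d.]+)" '
    r'(?P<response>\d{3}) (?P<bytes>\d+|-)'
)

# Very reduced user-agent parsing: only recognizes a Chrome version token.
CHROME_UA = re.compile(
    r'Chrome/(?P<version>(?P<major>\d+)\.(?P<minor>\d+)\.(?P<patch>\d+)[\d.]*)'
)

line = ('10.0.0.1 - - [13/Mar/2025:12:27:22 +0000] "GET / HTTP/1.1" 304 0 "-" '
        '"Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 '
        '(KHTML, like Gecko) Chrome/134.0.0.0 Safari/537.36"')

# match() anchors at the start only, so the trailing referrer and
# user-agent parts of the line are simply left unparsed.
fields = COMMON_LOG.match(line).groupdict()

ua_match = CHROME_UA.search(line)
user_agent = {"name": "Chrome", **ua_match.groupdict()} if ua_match else {}

print(fields)
print(user_agent)
```

Note that every extracted value is still a string at this point, which matches the quoted "bytes" => "0" in the output above.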

3. Collecting nginx logs with Logstash: geoip

    [root@elk93 ~]# cat /etc/logstash/conf.d/05-nginx-geoip-stdout.conf
    input {
      file {
        path => "/var/log/nginx/access.log"
        start_position => "beginning"
      }
    }

    filter {
      grok {
        match => { "message" => "%{HTTPD_COMMONLOG}" }
      }
      useragent {
        source => "message"
        target => "linux95_user_agent"
      }
      # Look up latitude and longitude coordinates from a public IP address.
      geoip {
        # Field that holds the public IP address to look up.
        source => "clientip"
      }
      mutate {
        remove_field => [ "@version","host","path" ]
      }
    }

    output {
      stdout {
        codec => rubydebug
      }
    }
    [root@elk93 ~]# logstash -rf /etc/logstash/conf.d/05-nginx-geoip-stdout.conf
    {
        "verb" => "GET",
        "@timestamp" => 2025-03-13T12:37:05.116Z,
        "ident" => "-",
        "httpversion" => "1.1",
        "timestamp" => "13/Mar/2025:12:31:27 +0000",
        "clientip" => "221.218.213.9",
        "linux96_user_agent" => {
            "device" => "Other",
            "os_full" => "Windows 10",
            "name" => "Chrome",
            "os_name" => "Windows",
            "os" => "Windows",
            "minor" => "0",
            "os_major" => "10",
            "version" => "134.0.0.0",
            "os_version" => "10",
            "major" => "134",
            "patch" => "0"
        },
        "auth" => "-",
        "message" => "221.218.213.9 - - [13/Mar/2025:12:31:27 +0000] \"GET / HTTP/1.1\" 304 0 \"-\" \"Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/134.0.0.0 Safari/537.36\"",
        "bytes" => "0",
        "request" => "/",
        "response" => "304"
    }

4. Collecting nginx logs with Logstash: date

    [root@elk93 ~]# cat /etc/logstash/conf.d/06-nginx-date-stdout.conf
    input {
      file {
        path => "/var/log/nginx/access.log"
        start_position => "beginning"
      }
    }

    filter {
      grok {
        match => { "message" => "%{HTTPD_COMMONLOG}" }
      }
      useragent {
        source => "message"
        target => "linux95_user_agent"
      }
      geoip {
        source => "clientip"
      }
      # Convert the date field.
      date {
        # Match the date field and convert it to a date type for later storage in ES.
        # Build the format string from the pattern table in the official docs:
        # https://www.elastic.co/guide/en/logstash/7.17/plugins-filters-date.html#plugins-filters-date-match
        # "timestamp" => "23/Oct/2024:16:25:25 +0800"
        match => [ "timestamp", "dd/MMM/yyyy:HH:mm:ss Z" ]
        # Field that receives the parsed date; if unset, "@timestamp" is overwritten.
        target => "novacao-timestamp"
      }
      mutate {
        remove_field => [ "@version","host","path" ]
      }
    }

    output {
      stdout {
        codec => rubydebug
      }
    }
    [root@elk93 ~]# logstash -rf /etc/logstash/conf.d/05-nginx-date-stout.conf
    {
        "ident" => "-",
        "clientip" => "10.0.0.1",
        "bytes" => "0",
        "novacao-timestamp" => 2025-03-13T12:57:39.000Z,
        "httpversion" => "1.1",
        "linux95_user_agent" => {
            "os_full" => "Windows 10",
            "minor" => "0",
            "name" => "Chrome",
            "device" => "Other",
            "os_name" => "Windows",
            "major" => "134",
            "patch" => "0",
            "version" => "134.0.0.0",
            "os_major" => "10",
            "os" => "Windows",
            "os_version" => "10"
        },
        "request" => "/",
        "auth" => "-",
        "verb" => "GET",
        "message" => "10.0.0.1 - - [13/Mar/2025:12:57:39 +0000] \"GET / HTTP/1.1\" 304 0 \"-\" \"Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/134.0.0.0 Safari/537.36\"",
        "@timestamp" => 2025-03-13T12:58:43.691Z,
        "timestamp" => "13/Mar/2025:12:57:39 +0000",
        "response" => "304"
    }
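The date filter above turns the text in the timestamp field into a real point in time. The same conversion can be sketched in Python, where the strptime format `%d/%b/%Y:%H:%M:%S %z` plays the role of `dd/MMM/yyyy:HH:mm:ss Z`. This is an analogy rather than the plugin's own code; `parse_nginx_timestamp` is a hypothetical helper, and `%b` assumes an English (or C) locale for month names.

```python
from datetime import datetime, timezone


def parse_nginx_timestamp(value: str) -> datetime:
    """Parse e.g. '13/Mar/2025:12:57:39 +0000' and normalize it to UTC."""
    parsed = datetime.strptime(value, "%d/%b/%Y:%H:%M:%S %z")
    return parsed.astimezone(timezone.utc)


event = {"timestamp": "13/Mar/2025:12:57:39 +0000"}
# Like `target => "novacao-timestamp"`: store the result in a separate
# field and leave the original text field untouched.
event["novacao-timestamp"] = parse_nginx_timestamp(event["timestamp"])
print(event["novacao-timestamp"].isoformat())  # 2025-03-13T12:57:39+00:00

# The example from the config comment, which carries a +0800 offset.
shifted = parse_nginx_timestamp("23/Oct/2024:16:25:25 +0800")
print(shifted.isoformat())                     # 2024-10-23T08:25:25+00:00
```

The second call shows why the zone offset matters: a +0800 log time lands eight hours earlier once normalized to UTC.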

5. Logstash采集nginx日志之mutate案例

[root@elk93 ~]# cat /etc/logstash/conf.d/06-nginx-mutate-stdout.conf

input { file { path => "/var/log/nginx/access.log"start_position => "beginning"}

} filter {grok {match => { "message" => "%{HTTPD_COMMONLOG}" }}useragent {source => "message"target => "linux95_user_agent"}geoip {source => "clientip"}date {match => [ "timestamp", "dd/MMM/yyyy:HH:mm:ss Z" ]target => "novacao-timestamp"}# 对指定字段进行转换处理mutate {# 将指定字段转换成我们需要转换的类型convert => {"bytes" => "integer"}remove_field => [ "@version","host","message" ]}

}output { stdout { codec => rubydebug }

}[root@elk93 ~]# rm -f /usr/share/logstash/data/plugins/inputs/file/.sincedb*

[root@elk93 ~]# logstash -rf /etc/logstash/conf.d/06-nginx-mutate-stdout.conf

{"@timestamp" => 2025-03-13T13:00:17.201Z,"auth" => "-","verb" => "GET","linux95_user_agent" => {"major" => "134","name" => "Chrome","os" => "Windows","minor" => "0","version" => "134.0.0.0","device" => "Other","os_name" => "Windows","os_major" => "10","os_full" => "Windows 10","os_version" => "10","patch" => "0"},"clientip" => "10.0.0.1","path" => "/var/log/nginx/access.log","ident" => "-","timestamp" => "13/Mar/2025:13:00:14 +0000","request" => "/","novacao-timestamp" => 2025-03-13T13:00:14.000Z,"response" => "304","bytes" => 0,"httpversion" => "1.1"

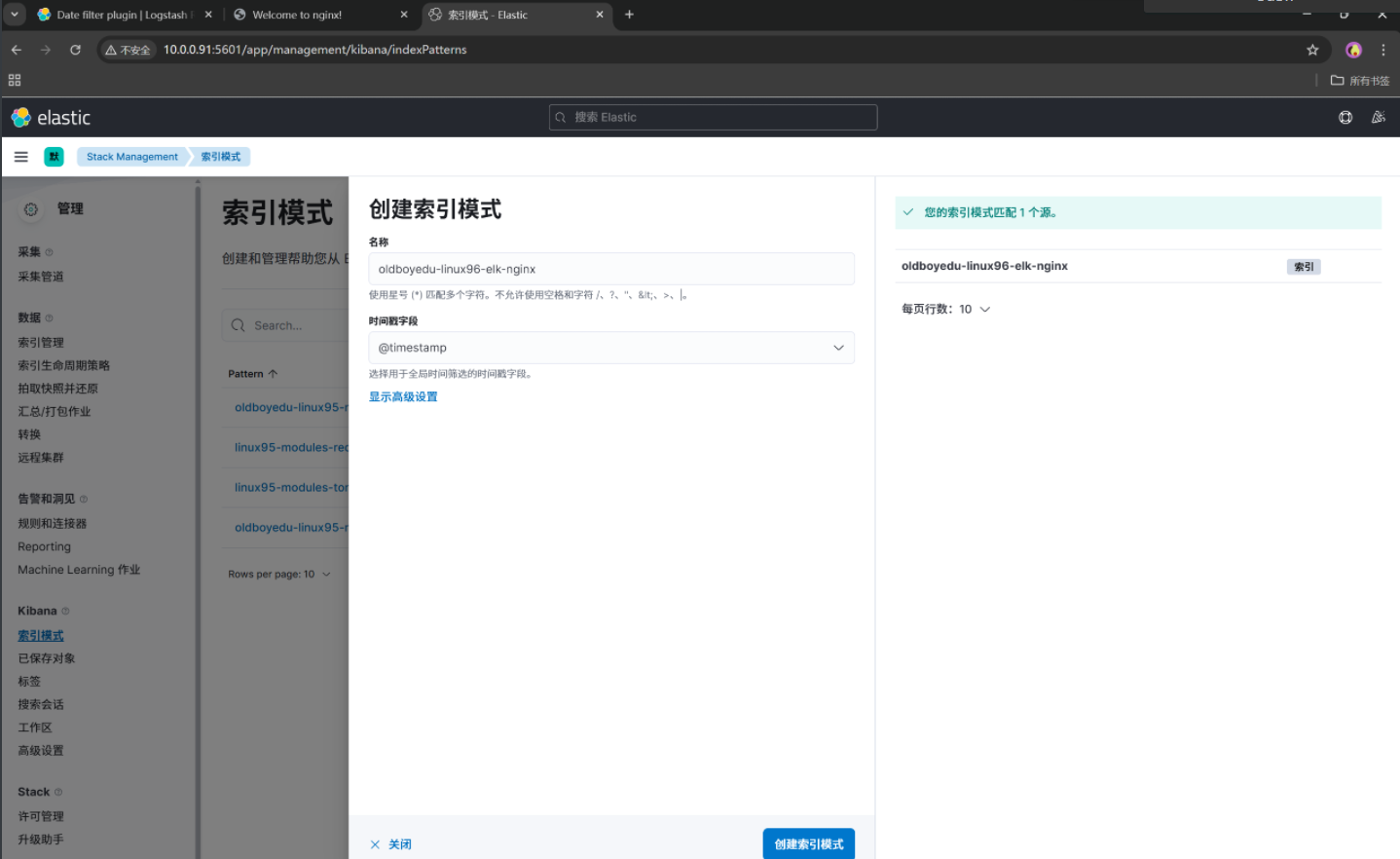

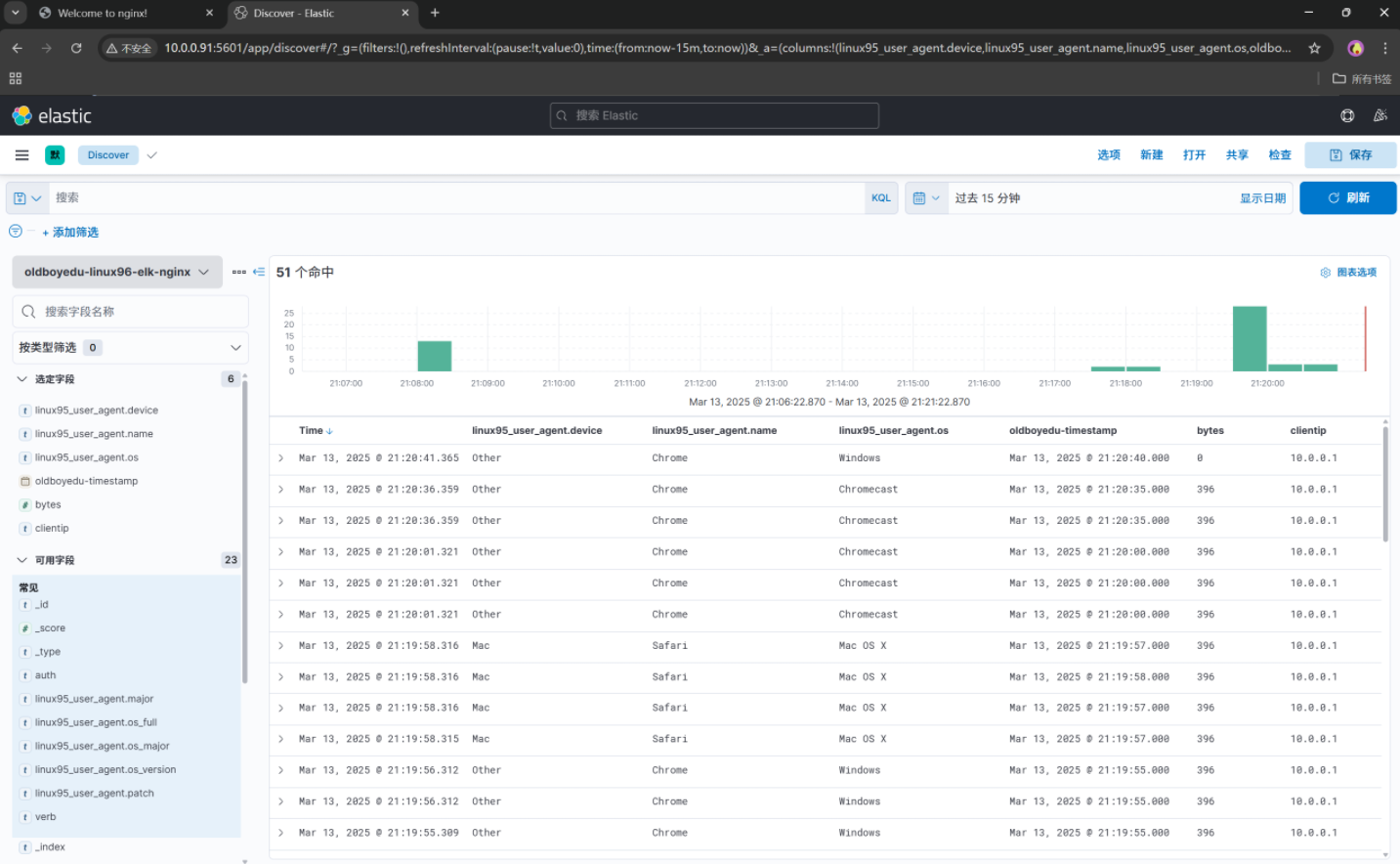

}6. Logstash采集nginx日志到ES集群并出图展示

    [root@elk93 ~]# cat /etc/logstash/conf.d/07-nginx-to-es.conf
    input {
      file {
        path => "/var/log/nginx/access.log"
        start_position => "beginning"
      }
    }

    filter {
      grok {
        match => { "message" => "%{HTTPD_COMMONLOG}" }
      }
      useragent {
        source => "message"
        target => "linux95_user_agent"
      }
      geoip {
        source => "clientip"
      }
      date {
        match => [ "timestamp", "dd/MMM/yyyy:HH:mm:ss Z" ]
        target => "novacao-timestamp"
      }
      # Transform the specified fields.
      mutate {
        # Convert the given fields to the required types.
        convert => {
          "bytes" => "integer"
        }
        remove_field => [ "@version","host","message" ]
      }
    }

    output {
      stdout {
        codec => rubydebug
      }
      elasticsearch {
        # Hosts of the ES cluster.
        hosts => ["10.0.0.91:9200","10.0.0.92:9200","10.0.0.93:9200"]
        # Name of the index in the ES cluster.
        index => "novacao-linux96-elk-nginx"
      }
    }
    [root@elk93 ~]# rm -f /usr/share/logstash/data/plugins/inputs/file/.sincedb*
    [root@elk93 ~]# logstash -rf /etc/logstash/conf.d/06-nginx-mutate-stdout.conf

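Known problem:

    Failed (timed out waiting for connection to open). Sleeping for 0.02

Problem description:

With Elastic Stack 7.17.28, Logstash may fail to write to ES.

TODO:

Investigate whether an upstream change is what prevents the write from succeeding, and whether an additional configuration parameter is required.

Temporary workarounds:

1. Remove the geoip plugin from the filter section and do not add it back, then reload the nginx service, because the nginx log file ends up locked.

2. Downgrade to an earlier version.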

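VI. Summary

Logstash is a capable open-source data processing pipeline that plays an important role in collecting, processing, and shipping data. Used together with Elasticsearch, Kibana, and related tools, it supports efficient data management and analysis and is widely used for log processing and data monitoring.

- Logstash architecture

  - Multiple instances and pipelines

  - input

  - output

  - filter

- Common filter components

  - grok: extracts arbitrary text with regular expressions and stores it in named fields.

  - date: converts date fields.

  - mutate: transforms specified fields, for example by converting their data types.

  - useragent: extracts information about the user's device.

  - geoip: looks up latitude and longitude coordinates from a public IP address.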
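Of the filters summarized above, mutate is the easiest to picture in code. The Python sketch below imitates the two options used in this post, convert and remove_field. It is a toy model under my own assumptions (the function name `mutate` and the small `casts` table are made up here), not the plugin's implementation.

```python
def mutate(event: dict, convert: dict[str, str], remove_field: list[str]) -> dict:
    """Return a copy of `event` with types converted and fields removed."""
    casts = {"integer": int, "float": float, "string": str}
    result = dict(event)
    # convert: change the type of each listed field, if the field is present.
    for field, target_type in convert.items():
        if field in result:
            result[field] = casts[target_type](result[field])
    # remove_field: drop each listed field, ignoring ones that are absent.
    for field in remove_field:
        result.pop(field, None)
    return result


event = {"@version": "1", "host": "elk93", "message": "raw line",
         "bytes": "0", "response": "304"}
cleaned = mutate(event,
                 convert={"bytes": "integer"},
                 remove_field=["@version", "host", "message"])
print(cleaned)  # {'bytes': 0, 'response': '304'}
```

Converting bytes to an integer before indexing matters because numeric aggregations such as sums and averages need a numeric field rather than a string.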